The Kings In Your Castle Part 4: Packers, Crypters and a Pack of RATs

In part 4 of our series “The Kings In Your Castle”, we return to the question: what does sophistication even mean? I’ll outline what complexity means from a malware analyst’s perspective, why malware aims to be undecipherable, and why it sometimes doesn’t even try. This entry also presents our findings on commodity RATs within the corpus of malware we analyzed as part of our talk at the Troopers conference in March.

If you are interested in previous parts of the series please check them out here, here and here.

What does sophistication even mean?

The complexity of software is a rather soft metric that has never been rigorously defined. For a malware analyst the notion takes on additional shades, since malware by nature aims to hide what it does. At least most of it does, one would think?

What challenges analysts are techniques such as code obfuscation or the use of well-fortified crypters. Structured application design is also a remarkable challenge, although this might sound counter-intuitive. Multi-component malware with a well thought-out object-oriented design and highly interdependent components causes more of a headache for an analyst than any crypter out there. In fact, many of the well-known high-profile attack toolsets aren’t protected by a packer at all. The assumption is that runtime packers potentially attract unwanted attention from security products, and that for highly targeted attacks they might not be necessary in the first place. Malware developers with sufficient dedication are very well able to hide a program’s purpose without a runtime packer. So why do we still see packed malware?

A software crypter is a piece of technology that obfuscates software and its intentions, and also changes its appearance. Crypters and packers are frequently applied to malware to ensure the reusability of the actual malcode. That way, a detection written for one protected sample will not match the same malware once it has been re-packed and deployed on a different system.

Let’s take a step back though. The ‘perfect targeted attack’ is performed with a toolset dedicated to one target only. We can conclude that a crypter is needed either if the authors aren’t capable of writing undetectable malware or, more likely, if the malware is intended to be reused. This makes sense once you consider that writing malware takes time and money: a custom attack toolset represents an actual (and quite substantial) investment.

Finally, with economic aspects in mind, we can conclude that attacks performed with plain tools are the more expensive ones, while attacks that use packers and crypters to protect malware are less resource intensive.

The hypothesis we started our research with was that most targeted malware comes without a crypter. More precisely, we stated that targeted malware is significantly less protected than random malicious software. Again, random malware doesn’t know its target and is by definition intended to infect a large number of individuals, while targeted malware is supposedly designed for a limited number of targets.

Packer Detection (Like PEiD Was Broken)™

Now, the usage statistics of runtime packers and crypters would be easy to gather from our dataset, if the available state-of-the-art packer detection tools weren’t stuck somewhere in 1997. In practice, the question of whether a file is packed is not trivially answered. One of the tools frequently used to identify packers is PEiD. PEiD applies pre-defined signatures to the first code bytes starting from the executable’s entry point, which easily fails if the code at the packed binary’s entry point changes only slightly.
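To illustrate why this kind of matching is brittle, here is a minimal sketch of entry-point signature matching with wildcards. It is not PEiD’s actual implementation; the signature and the stub bytes are invented for the example, and `parse_signature` and `matches_at_entry_point` are hypothetical helper names.

```python
from typing import List, Optional


def parse_signature(text: str) -> List[Optional[int]]:
    """Turn a string such as '60 BE ?? ??' into byte values; '??' becomes a wildcard (None)."""
    return [None if token == "??" else int(token, 16) for token in text.split()]


def matches_at_entry_point(code: bytes, signature: List[Optional[int]]) -> bool:
    """Return True if the first bytes of `code` match the signature position by position."""
    if len(code) < len(signature):
        return False
    return all(s is None or s == b for s, b in zip(signature, code))


# Hypothetical signature and entry-point bytes, made up for this example.
SIGNATURE = parse_signature("60 BE ?? ?? ?? ?? 8D BE")
original_stub = bytes.fromhex("60be001040008dbe0000")
# The same stub with a single one-byte NOP (0x90) prepended.
modified_stub = b"\x90" + original_stub

print(matches_at_entry_point(original_stub, SIGNATURE))
print(matches_at_entry_point(modified_stub, SIGNATURE))
```

The wildcards absorb changes in operands, but a single inserted instruction shifts every following byte, so the second check no longer matches.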

Aiming for more accurate results, we decided to come up with our own packer evaluation. We put together a set of indicators for abnormal binary structures that are frequently seen in connection with runtime packers. Please note that the indicators are just that: indicators. The evaluation algorithm leaves some room for discrepancies. In practice though, manual analysis of randomly picked samples has shown the evaluation to be reasonably precise.

We gathered the following PE attributes:

- Section count smaller than 3

- Count of TLS sections bigger than 0

- No imphash value present, thus import section empty or not parseable

- Entropy value of code section smaller than 6.0 or bigger than 6.7

- Entry point located in section which is not named ‘.text’, ‘.itext’ or ‘.CODE’

- Ratio of Windows API calls to file size smaller than 0.1
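The checks above can be sketched as follows. This is an illustration only, not the published evaluation code: it assumes the attributes have already been extracted from the PE file into a hypothetical `PEFeatures` record, the indicator names are made up, and the API-call ratio is taken as a precomputed value because its exact unit is not spelled out here.

```python
import math
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy in bits per byte, between 0.0 and 8.0."""
    if not data:
        return 0.0
    total = len(data)
    return -sum((n / total) * math.log2(n / total) for n in Counter(data).values())


@dataclass
class PEFeatures:
    """Hypothetical container for attributes extracted beforehand."""
    section_count: int
    tls_section_count: int
    imphash: Optional[str]   # None or empty if imports could not be parsed
    code_entropy: float      # e.g. shannon_entropy(code_section_bytes)
    entry_section: str       # name of the section holding the entry point
    api_ratio: float         # ratio of Windows API calls to file size


CODE_SECTION_NAMES = {".text", ".itext", ".CODE"}


def packer_indicators(f: PEFeatures) -> List[str]:
    """Return the names of all indicators that fire for one sample."""
    checks = {
        "few_sections": f.section_count < 3,
        "tls_present": f.tls_section_count > 0,
        "no_imphash": not f.imphash,
        "odd_entropy": f.code_entropy < 6.0 or f.code_entropy > 6.7,
        "odd_entry_section": f.entry_section not in CODE_SECTION_NAMES,
        "low_api_ratio": f.api_ratio < 0.1,
    }
    return [name for name, hit in checks.items() if hit]


# Two invented samples: one ordinary-looking, one with typical packer traits.
plain = PEFeatures(5, 0, "0f1e2d3c", 6.4, ".text", 0.3)
suspicious = PEFeatures(2, 0, None, 7.8, "UPX1", 0.01)
print(packer_indicators(plain))
print(packer_indicators(suspicious))
```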

Of course, no single one of the gathered attributes is a surefire indicator in a packer evaluation process. In reality, some of the presumed anomalies are frequently seen in unpacked binaries and depend, for example, on the compiler that produced the executable. Nevertheless, any of these features is reason enough to raise suspicion and, as mentioned before, the evaluation works rather reliably on our chosen dataset.

In our algorithm the values are weighted into an evaluation score, and an analyst can then draw a conclusion from the final score. It is worth noting that the chosen thresholds are quite sensitive, so one would expect to detect too many “potentially packed” samples rather than too few.
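A minimal sketch of such a weighted score is shown below. The indicator names and weights are placeholders invented for this example, chosen only so that they add up to the scale’s maximum of 220; the actual weights are those in the published code.

```python
from typing import Dict, Iterable

# PLACEHOLDER weights, not the values from the published code.
INDICATOR_WEIGHTS: Dict[str, int] = {
    "few_sections": 30,
    "tls_present": 20,
    "no_imphash": 50,
    "odd_entropy": 50,
    "odd_entry_section": 40,
    "low_api_ratio": 30,
}

MAX_SCORE = sum(INDICATOR_WEIGHTS.values())


def packer_score(fired: Iterable[str]) -> int:
    """Sum the weights of all indicators that fired; unknown names are rejected."""
    score = 0
    for name in set(fired):  # count each indicator at most once
        if name not in INDICATOR_WEIGHTS:
            raise KeyError(f"unknown indicator: {name}")
        score += INDICATOR_WEIGHTS[name]
    return score


print(packer_score([]))
print(packer_score(["few_sections", "odd_entropy"]))
print(packer_score(INDICATOR_WEIGHTS), "of", MAX_SCORE)
```

Keeping the weights in one table makes it easy to tune how strongly each anomaly counts without touching the scoring logic.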

Further details about our packer evaluation method can be found within the published code.

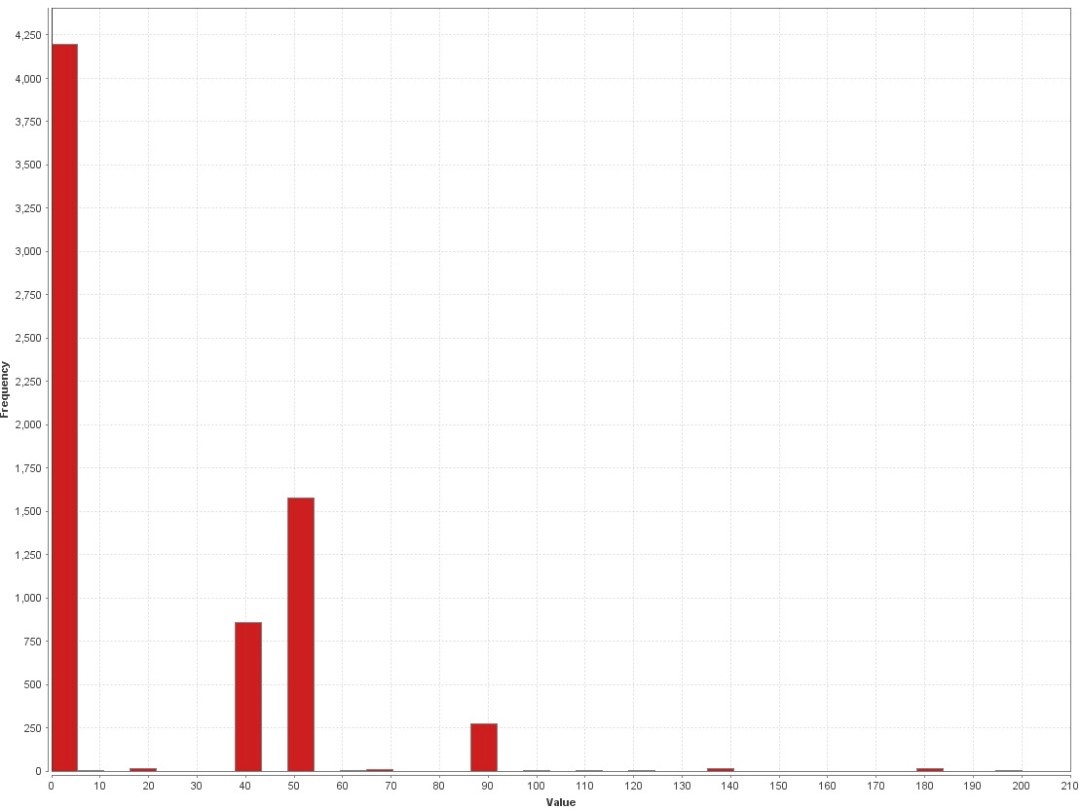

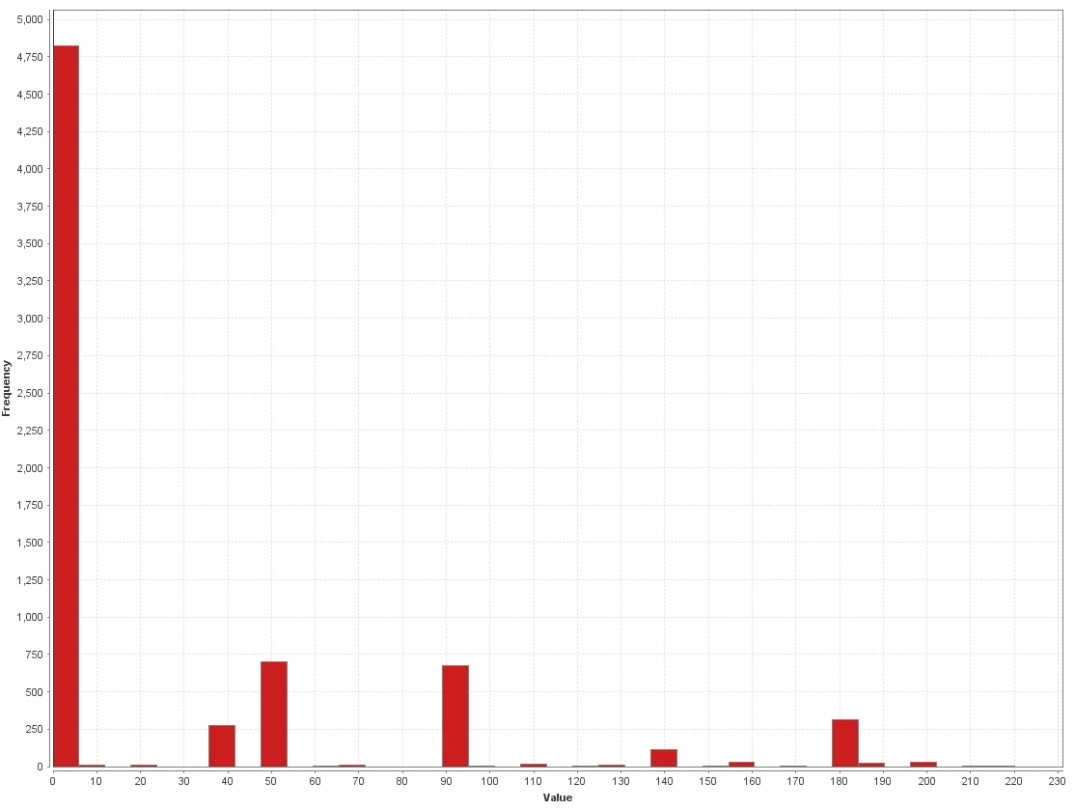

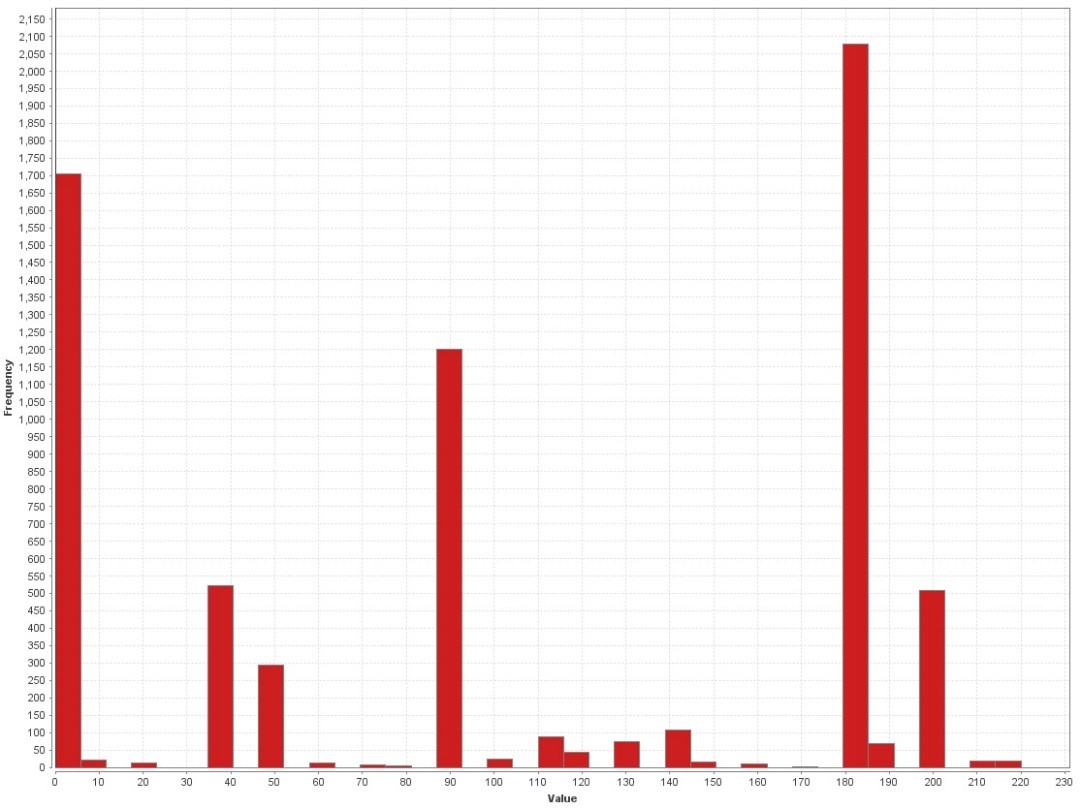

The results can be found in the charts above, which show the evaluation values in relation to sample counts. The maximum score a sample can reach on our scale is 220, meaning that all evaluation attributes exceed the chosen threshold. The charts show the evaluation performed on a benign sample set, on a targeted malware sample set and on a random malware sample set. Attention should be paid to the sample frequency count on the y-axis of each graph.

The graphs show very well how roughly a third of the benign samples show low-rated indicators. In the random malware sample set, less than a third of the samples show no indicators, while more than a third show remarkably high-rated indicators. A closer look at the benign samples rated at 40-50 revealed that most of them were packed with UPX, a binary packer used mainly for binary compression.

The remarkable bit is that the set of targeted malware binaries has the lowest overall count of packer indicators. This leaves us with two possible readings. On the one hand, following our hypothesis that targeted malware is significantly less protected by crypters than random malware, we take this result as confirmation.

On the other hand, what surely biases the result is that the chosen attributes are potentially influenced by the compilers used to build the binaries. Since the results for the targeted set are notably homogeneous, this also suggests that the larger part of the targeted malware within our dataset was probably not built with exotic compilers. As a research idea for future analysis, I’d like to put forward the somewhat far-fetched hypothesis that most targeted malware is written in C/C++ and compiled with the Visual Studio compiler. Curious, anyone?

Commodity RATs

Taking the question of malware sophistication further: in the past, the analysis community was frequently astonished by yet another incredibly complex targeted malware campaign. While it is true that certain targets require a high level of attack sophistication, most campaigns do not require components for proprietary systems or extremely stealthy methods. An interesting case of high-profile attacks being carried out with commodity malware was uncovered last year, when CitizenLab published their report about Packrat. Packrat is a South American threat actor that has been active for around seven years, performing targeted espionage and disinformation campaigns in various South American countries. The actor is best known for targeting, among others, Alberto Nisman, the late Argentinean prosecutor who raised a case against the Argentinean government in 2015.

Whoever is responsible for said campaigns had a clear picture of whom to target. The actor certainly possessed sufficient personnel and financial resources, yet made a conscious decision not to invest in a high-end toolchain. Looking into the malware set used by Packrat, one finds a list of so-called commodity RATs, off-the-shelf malware used for remote access and espionage. The list of RATs includes CyberGate, XTremeRAT and AlienSpy; those tools themselves are well-known malware families associated with espionage.

Again, using repackaged commodity RATs is notably cheaper than writing custom malware. The key to remaining stealthy in this case is the use of crypters and packers. In the end though, by not burning resources on a custom toolchain, the attacker can spend those resources elsewhere, potentially even on increasing the target count.

Hunting down a RAT pack

Looking at the above facts, one question emerges: how prevalent are pre-built RATs within the analysis set at all? To establish a count of commodity RATs, we again relied on the detection names of Microsoft Defender. The anti-virus solution from Microsoft has in the past proven rather slow in picking up new detections, while providing quite accurate results once detections are deployed to the engines. Accuracy here includes a certain reliability when it comes to naming malware.

For evaluation, we chose to search for the following list of commodity malware:

- DarkComet (Fynloski)

- BlackShades (njRat, Bladabindi)

- Adwind

- PlugX

- PoisonIvy (Poison)

- XTremeRAT (Xtrat)

- Handpicked RAT binaries

The selected set is what we noticed when going through the malware corpus manually. Please note, though, that the list of existing commodity RATs is far longer.
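As a rough sketch of how such a count could be derived from detection names, the snippet below maps the family part of a name to the RAT labels from the list above and tallies samples and events. It assumes names follow Microsoft’s `Type:Platform/Family.Variant` pattern; the example records, the alias table’s spelling and the function names are illustrative assumptions, not our actual tooling.

```python
import re
from collections import Counter, defaultdict
from typing import Dict, Iterable, Optional, Tuple

# Detection-name family -> label used in the list above (lower-cased for matching).
FAMILY_ALIASES = {
    "fynloski": "DarkComet",
    "bladabindi": "BlackShades",
    "adwind": "Adwind",
    "plugx": "PlugX",
    "poison": "PoisonIvy",
    "xtrat": "XTremeRAT",
}

# Family is the part after '/' and before an optional '.Variant' or '!suffix'.
_FAMILY_RE = re.compile(r"/([^.!/]+)")


def rat_family(detection: str) -> Optional[str]:
    """Return the RAT label for a detection name, or None if it is not in the list."""
    match = _FAMILY_RE.search(detection)
    if match is None:
        return None
    return FAMILY_ALIASES.get(match.group(1).lower())


def count_rats(records: Iterable[Tuple[int, str]]) -> Tuple[Counter, Dict[str, int]]:
    """Count samples per RAT and distinct events per RAT from (event_id, detection) pairs."""
    samples: Counter = Counter()
    events = defaultdict(set)
    for event_id, detection in records:
        family = rat_family(detection)
        if family is not None:
            samples[family] += 1
            events[family].add(event_id)
    return samples, {family: len(ids) for family, ids in events.items()}


# Invented records for illustration: (event id, detection name).
records = [
    (1, "Backdoor:Win32/Plugx.A"),
    (2, "Backdoor:Win32/Plugx.B"),
    (2, "Backdoor:Java/Adwind"),
    (2, "Backdoor:Java/Adwind"),
    (3, "Trojan:Win32/Dynamer!ac"),
    (3, ""),
]
sample_counts, event_counts = count_rats(records)
print(dict(sample_counts), event_counts)
```

Counting events separately from samples matters because a single event can contribute many binaries of the same family.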

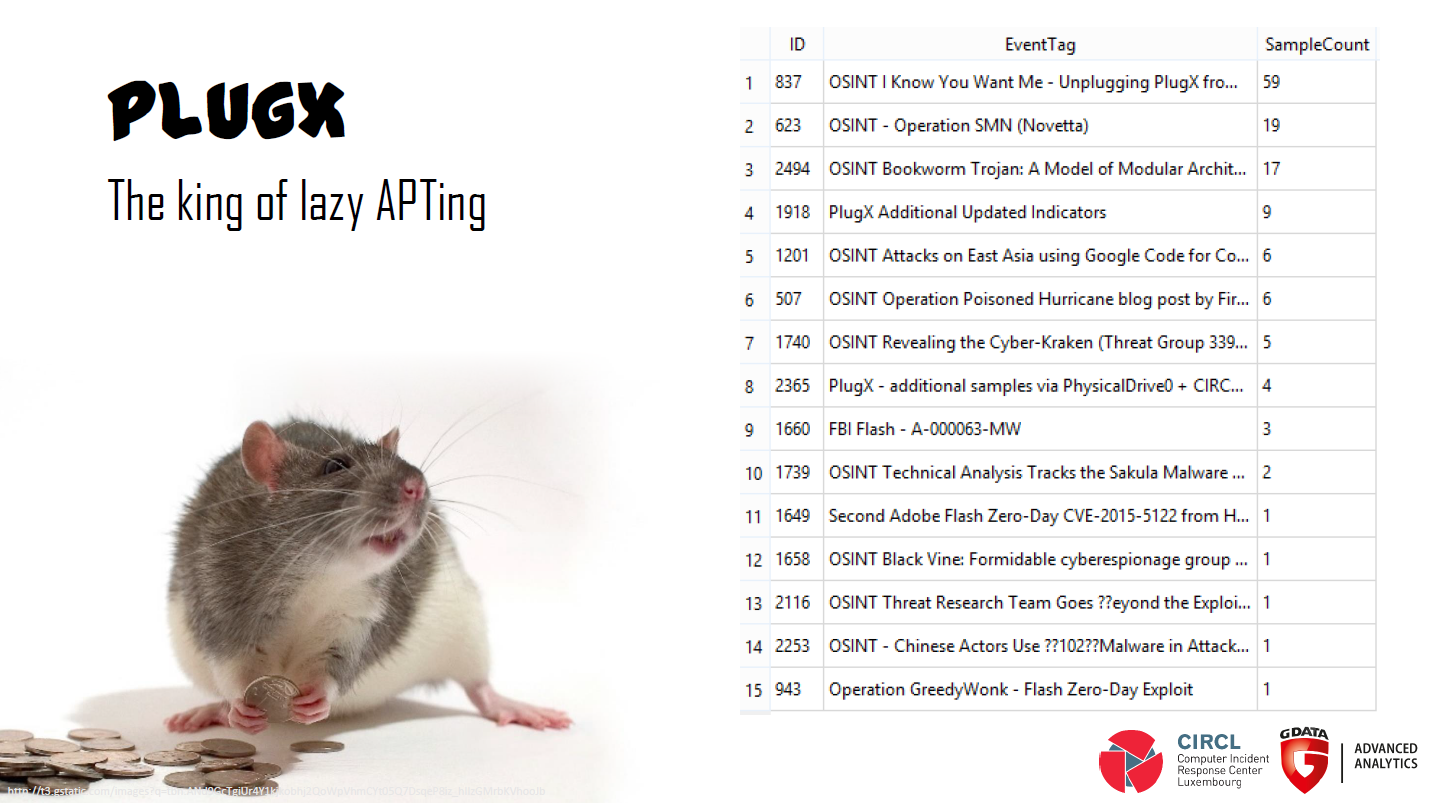

The “lazy king of APTing”, so to speak, is PlugX. This commodity RAT popped up in altogether 15 different events listed in the MISP database.

The winner in sample numbers was Adwind, a Java-based RAT designed to infect different platforms. Adwind is malware that has been sold under different names, including Frutas RAT and Unrecom RAT. Security firm Fidelis published nice insights on Adwind under the much appreciated title “RAT in a jar”.

The graphic above shows the total number of RATs and related events found within our data set of 326 events containing 8,927 malware binaries.

In total, we counted that almost a quarter of the inspected events made use of one RAT or another. Looking at the sample set, one ninth of the total is composed of pre-built RATs. These numbers are rather high, considering that, at least in the heads of analysts, targeted malware is complex and sophisticated.

Still, why do we even bother with high numbers of commodity malware? For one, as mentioned before, they help drive down attack cost. Furthermore, they provide functionality that is quite advanced from the beginning, equipping even unskilled attackers with a Swiss Army knife of malware they couldn’t implement themselves, even if they tried really hard. These do-it-yourself APT tools enable wannabe spies with small budgets, growing the number of potential offenders.

Furthermore, off-the-shelf RATs have been seen in use by certain advanced attackers. They can cause confusion about the actual offender, as they do not allow for attribution on the basis of the binaries at all. In other words, one would not know whether one is being targeted by a script kiddie or a nation-state actor. Currently, it remains unclear whether commodity RATs have been used deliberately to conceal attribution, but the assumption is a plausible one.